Sanjaya Mishra, Nitesh Kumar Jha and Kaushal Kumar Bhagat

2025 VOL. 12, No. 3

Abstract: The benchmarking toolkit for Technology-Enabled Learning (TEL) developed by the Commonwealth of Learning (COL) is designed to assess TEL practices in higher education institutions. This study evaluated the content validity, internal consistency, and inter-domain relationships of the toolkit using a survey distributed to 355 practitioners across 21 institutions, which yielded 90 usable responses. The survey also included open-ended questions for each domain and for general feedback. Content validity was assessed with the Content Validity Index (CVI), which showed an overall score of 0.83, indicating high validity. Internal consistency was examined using Cronbach’s α, average inter-item correlation, and signal-to-noise ratio, with Cronbach’s α values ranging from 0.83 to 0.93 and average inter-item correlations between 0.53 and 0.76. Domain-level mean scores were calculated, and inter-domain Pearson correlations revealed moderate to strong relationships (0.44 to 0.79), suggesting that while the domains are related, they measure distinct aspects of TEL implementation. Institutions receiving structured support from COL generally achieved higher TEL scores, highlighting the positive impact of such support. In addition, qualitative analysis of the open-ended responses showed that the benchmarking toolkit was useful for identifying gaps and for addressing them through an action plan that supports digital transformation in higher education institutions. Overall, the findings demonstrate that the toolkit is a reliable and valid instrument for assessing TEL practices, providing a strong foundation for institutional improvement and for further validation with larger samples.

Keywords: benchmarking, Commonwealth of Learning, technology-enabled learning, validation

Since the Covid-19 pandemic, digital learning has steadily advanced, with an increasing number of higher education institutions adopting technology to support teaching and learning in various forms. The pandemic experience accelerated the integration of technology, enabling institutions to enhance the quality of education. The term digital learning is often used interchangeably with expressions such as online learning, technology-enhanced learning, and technology-enabled learning. Broadly, however, digital learning encompasses a wide array of technologies for teaching and learning, including computers, mobile devices, digital video, interactive multimedia, networked learning, simulations, augmented and virtual reality, and artificial intelligence. Implementing these technologies requires significant human and financial resources, so educational institutions vary widely in their capacity to adopt them effectively.

In order to promote digital learning, the Commonwealth of Learning (COL) launched the Technology-Enabled Learning (TEL) initiative in 2015, before the Covid-19 pandemic. The core philosophy of TEL is that technology serves as a facilitator for pedagogical transformation and inclusive learning, rather than being an end in itself (Kirkwood & Price, 2016). It emphasises designing meaningful learning experiences supported by technology to improve access, equity, and quality in education. The initiative supports a range of technologies, with a primary focus on introducing blended learning in post-secondary institutions across the Commonwealth by integrating information and communication technologies (ICTs) into teaching and learning.

From a theoretical perspective, the TEL initiative is grounded in the Policy-Capacity-Technology (PCT) model (Mishra & Panda, 2020), which aligns closely with institutional development and learning-for-development paradigms. The model proposes that effective TEL implementation depends on three interlinked dimensions: 1) establishing appropriate institutional policies, 2) training staff to use technologies and tools for pedagogical purposes, and 3) ensuring that technologies are available and accessible to both students and teachers. This systemic approach reflects a ‘learning systems’ view of institutional change, where technology adoption becomes part of a broader process of educational innovation and capacity development (Laurillard, 2012).

COL, as an intergovernmental organisation, supports educational institutions in the Commonwealth in adopting the PCT model over a period of two to three years, typically in three phases: Phase 1 (preparation), Phase 2 (development), and Phase 3 (maturation). The implementation process involves conducting a baseline survey, collaboratively developing a TEL policy, building staff capacity to design blended and online courses, delivering blended courses to students, evaluating these courses, and benchmarking TEL practices. Benchmarking plays a critical role in assessing and developing institutional capacity to leverage TEL for improved access to quality education and training.

TEL benchmarking starts with a collaborative institutional self-assessment using the TEL Benchmarking Toolkit to identify strengths and weaknesses (Sankey & Mishra, 2019). The benchmarking process helps in planning future improvements based on shared experiences in integrating ICT into teaching and learning. Self-assessment results are then validated through an external review, which helps normalise scores, allows for comparison with similar institutions, and facilitates the sharing of best practices to support continuous improvement. Over the years, COL has assisted multiple post-secondary institutions in adopting TEL benchmarking. This paper reviews the TEL Benchmarking Toolkit through the lens of the participants who have implemented it in their institutions. The primary goal was to validate and refine the indicators used to assess TEL implementation in an institution.

By combining the PCT model with benchmarking practices, this study conceptualises TEL development as a continuous institutional learning process, i.e., it links policy formulation, professional capacity-building, and technology adoption to quality assurance and organisational maturity. This integrated framework supports the broader ‘learning for development’ philosophy that underpins COL’s mission, emphasising that technology-enabled learning should contribute not only to educational quality but also to social inclusion and sustainable institutional growth.

The Australasian Council on Open and Digital Education (ACODE) started using benchmarking in 2007, and currently uses a version released in 2024 (Sankey et al., 2024). The latest version uses the following nine benchmarks in contrast to the previous version (Sankey et al., 2014) that had only eight benchmarks:

The last benchmark, on learning spaces, is new to the latest ACODE benchmarking framework. In Australia, ACODE benchmarking is used as a compliance tool for quality assurance regulations (Sankey & Padró, 2016). The Tertiary Education Quality and Standards Agency (TEQSA) defines benchmarking as a “structured, collaborative, learning process for comparing practices, processes or performance outcomes” that also serves “as a quality process used to evaluate performance by comparing institutional practices to sector good practice” (TEQSA, 2016, p. 1).

While the term “benchmarking” carries different meanings in different contexts (Alderman & Murray, 2025), it is generally seen as a method for continuous improvement and institutional self-discovery. Volungevičienė et al. (2021) reviewed 20 self-assessment tools for digital education practices, including COL’s TEL Benchmarking Toolkit. They found that while a range of criteria has been used, many tools lack a follow-up action plan. The review covered self-assessment tools for both course/programme-level and institution-level assessment.

The UNESCO IITE (2023) report Benchmarking of open universities reviewed seven frameworks for technology enabled/enhanced learning. Quality assurance emerged as a common element, though it was not always explicit. Sankey and Padró (2019) noted that the COL TEL Benchmarking Toolkit focuses on ensuring that a base level of quality practices is present in TEL application. However, they also indicated that the “domains are indicative and built on the premise that each institution is on a journey towards quality practice and that individual institutions are at different stages along this journey” (p. 7).

COL’s Benchmarking Toolkit for TEL comprises 44 indicators organised into ten domains. The toolkit was originally developed through a literature review, consultation with key stakeholders (those implementing TEL with COL support), and the experience of ACODE’s benchmarking of technology-enhanced learning (Sankey, 2014; Sankey et al., 2014; Sankey & Padró, 2016, 2019). The ten domains are (Sankey & Mishra, 2019):

Each indicator is scored from 1 (low level of achievement) to 5 (high level of achievement), and when allocating scores, the institution was expected to provide sufficient and suitable evidence to justify the score for each indicator. External experts then reviewed these scores in light of the rationale provided and finalised the scores for each domain and the overall TEL benchmarking total. The scores were interpreted using the following ranges:

≥ 4.5 Exceptional

3.5-4.49 Established

2.5-3.49 Developing

1.5-2.49 Limited

< 1.5 Poor
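The interpretation bands above amount to a simple lookup. The Python sketch below assumes that scores are reported to two decimal places and that each band's lower bound is inclusive (so 4.5 counts as Exceptional); the function name `interpret_tel_score` is hypothetical and not part of the toolkit itself.

```python
def interpret_tel_score(score: float) -> str:
    """Return the interpretation band for a 1-5 TEL benchmarking score.

    Assumes inclusive lower bounds, e.g. 4.5 -> "Exceptional", 4.49 -> "Established".
    """
    if not 1.0 <= score <= 5.0:
        raise ValueError("TEL benchmarking scores range from 1 to 5")
    # (lower bound, label) pairs, checked from the highest band downwards
    bands = [(4.5, "Exceptional"), (3.5, "Established"), (2.5, "Developing"), (1.5, "Limited")]
    for lower, label in bands:
        if score >= lower:
            return label
    return "Poor"


# The lowest and highest institutional scores reported in this study
print(interpret_tel_score(2.68))  # Developing
print(interpret_tel_score(4.75))  # Exceptional
```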

TEL benchmarking is a collaborative process, with institutional teams agreeing on scores, narratives, and an action plan for TEL’s future. The toolkit was designed not to assess specific programmes or courses but to strengthen institutional capacity for delivering quality blended and online education effectively. Thus, the TEL Benchmarking Toolkit is distinctive compared with several other tools that also address programme- or course-level quality assurance (e.g., APEC, 2019; EADTU, 2012). The underlying assumption is that an institution implementing TEL effectively will have the capacity to develop quality courses and programmes by integrating technology for appropriate delivery, irrespective of the modality. Such institutions are therefore expected to develop relevant documentation and guides to ensure that quality and standards are ‘fit for purpose.’ As indicated earlier, the TEL Benchmarking Toolkit has already been adopted by several institutions, and it is now timely to review its assumptions and the progress made.

The study focused on the following objectives:

It is important to note that the toolkit was developed through a review of existing benchmarking work, stakeholder consultation, and a peer review process. The toolkit was deployed in several institutions during 2020-2025, and to further scale its implementation, it was necessary to understand its validity and reliability from the perspective of users, especially those involved in implementing the toolkit in COL partner institutions.

The study adopted a mixed-methods approach to review COL’s TEL Benchmarking Toolkit. First, domain-wise scores from the 21 institutions that have so far adopted the toolkit were analysed. Data were extracted from publicly available TEL benchmarking reports on COL’s OASIS platform. These reports provided data for the ten domains and were based on the TEL Benchmarking Toolkit. Second, the 44 indicators in the toolkit were used to develop a survey questionnaire assessing each indicator’s relevance to the domain it is intended to measure. Participants rated each indicator on a four-point relevance scale (Yusoff, 2019):

1 = the indicator is not relevant to the measured domain.

2 = the indicator is somewhat relevant to the measured domain.

3 = the indicator is quite relevant to the measured domain.

4 = the indicator is highly relevant to the measured domain.

In addition to the 44 indicators, the survey included open-ended questions for each domain and general open-ended questions seeking feedback from the participants. The survey questionnaire was distributed to 355 participants who were involved in TEL benchmarking in the 21 institutions, and 90 usable responses were received. The scores were analysed using the content validity index (CVI) (Polit & Beck, 2006; Yusoff, 2019). The CVI was selected as the primary validation method because it directly quantifies expert consensus on the relevance and clarity of each indicator, making it particularly suitable for tool refinement based on expert judgment rather than behavioural testing (Polit & Beck, 2006; Zamanzadeh et al., 2015). In comparison, more advanced validation methods such as confirmatory factor analysis (CFA) require large samples and assume multivariate normality, so CFA was not feasible given the small sample size and the exploratory nature of this study.

Indicators with a CVI score greater than 0.5 were considered relevant, following the logic that if more than 50% of respondents rated an item as essential/relevant, it demonstrated content validity (Lawshe, 1975). The survey response rate was 25% (90 usable responses out of 355). This sample meets the recommended minimum sample-to-item ratio of 5-to-1 (Gorsuch, 1983; Memon et al., 2020) for domain-level analyses, although not for the full 44-item set (90/44 ≈ 2-to-1). In addition to the CVI analysis, we intended to perform factor analysis on the data. However, with only 90 responses, factor analysis across all 10 domains was not possible, as exploratory factor analysis typically requires larger samples to achieve stable factor solutions (de Winter et al., 2009; MacCallum et al., 1999). Preliminary attempts with 10-factor and 8-factor models produced unstable results. We therefore focused on internal consistency and inter-domain relationships, which are suitable alternatives when the sample size is insufficient for stable factor analysis (de Winter et al., 2009; MacCallum et al., 1999).
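The item-level CVI (I-CVI) described by Polit and Beck (2006) is the proportion of respondents rating an indicator 3 or 4 on the four-point scale, and a scale-level figure can be obtained by averaging the I-CVIs (S-CVI/Ave). The Python sketch below illustrates this with hypothetical ratings; it assumes that the overall CVI reported in this study is an S-CVI/Ave, which the text does not state explicitly, and the function and indicator names are illustrative only.

```python
from statistics import mean


def item_cvi(ratings: list[int]) -> float:
    """I-CVI: share of respondents rating an indicator 3 or 4 on the 1-4 relevance scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    if any(r not in (1, 2, 3, 4) for r in ratings):
        raise ValueError("ratings must be on the 1-4 relevance scale")
    relevant = sum(1 for r in ratings if r >= 3)
    return relevant / len(ratings)


def scale_cvi_average(ratings_by_indicator: dict[str, list[int]]) -> float:
    """S-CVI/Ave: mean of the item-level CVIs across all indicators (assumed definition)."""
    return mean(item_cvi(r) for r in ratings_by_indicator.values())


# Hypothetical ratings from five respondents for three illustrative indicators
ratings = {
    "indicator_a": [4, 4, 3, 4, 2],  # 4 of 5 rated relevant -> 0.8
    "indicator_b": [3, 4, 4, 4, 4],  # 5 of 5 -> 1.0
    "indicator_c": [2, 3, 1, 4, 2],  # 2 of 5 -> 0.4
}
cvis = {name: item_cvi(r) for name, r in ratings.items()}
retained = [name for name, cvi in cvis.items() if cvi > 0.5]  # threshold used in the text
print(retained)  # ['indicator_a', 'indicator_b']
print(round(scale_cvi_average(ratings), 3))  # 0.733
```

Averaging I-CVIs is only one way to summarise scale-level validity; a stricter universal-agreement variant counts only indicators that every respondent rated 3 or 4.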

Cronbach’s α was calculated for each domain to evaluate internal consistency. Cronbach’s α was used because it estimates the proportion of variance in observed domain scores that reflects true score variance, which helps in determining the reliability and cohesiveness of indicators within each domain (Cronbach, 1951; Tavakol & Dennick, 2011). Cronbach’s α values, combined with average inter-item correlations, allowed us to identify domains where indicators were conceptually aligned and those requiring revision or simplification. Together, these metrics support the refinement of the TEL toolkit by identifying redundant, inconsistent, or weakly related indicators.
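For reference, the conventional form of Cronbach’s (1951) coefficient for a domain with $k$ indicators is shown below, where $\sigma_i^2$ is the variance of indicator $i$ and $\sigma_X^2$ is the variance of the summed domain score (notation introduced here for illustration):

$$
\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_{i=1}^{k}\sigma_i^2}{\sigma_X^2}\right)
$$

If the indicators were uncorrelated, $\sigma_X^2$ would equal $\sum_i \sigma_i^2$ and $\alpha$ would be 0; the more strongly the indicators covary, the larger $\sigma_X^2$ becomes relative to the sum of the item variances, and the closer $\alpha$ moves to 1.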

The average inter-item correlation was also computed to examine how closely items within each domain were related, offering additional evidence of item homogeneity (Clark & Watson, 1995). The signal-to-noise ratio (S/N) was reported as a further indicator of reliability, reflecting the ratio of true score variance to error variance.

Domain-level mean scores were computed by averaging the scores of all items within a domain. These mean scores were then used to calculate inter-domain Pearson correlations to explore relationships between domains. Such inter-domain correlations provide insight into construct coherence and the degree to which related domains align conceptually, serving as a practical and valid approach when factor analysis is not possible due to small sample size (Tabachnick & Fidell, 2019; de Winter et al., 2009). All analyses were performed in R (version 4.5.1), using the psych package (Revelle, 2023).

For the qualitative component, responses to the open-ended questions were analysed thematically using Braun and Clarke’s (2006) six-step framework. The process involved data familiarisation, coding meaningful text segments, and grouping these into broader themes. Three major themes emerged from this process: (1) institutional improvement, reflecting how benchmarking revealed strengths and weaknesses (“It helped us see where we stand and what to improve”); (2) action-oriented planning, emphasising the usefulness of the Action Plan in providing timelines and responsibilities (“The plan gave us clear steps to move forward”); and (3) capacity and support, highlighting the need for ongoing training, infrastructure, and instructional design assistance (“More instructional design support and staff training are needed”). These qualitative insights enriched the quantitative findings, offering practitioner perspectives that helped explain how institutions experienced and acted on TEL benchmarking.

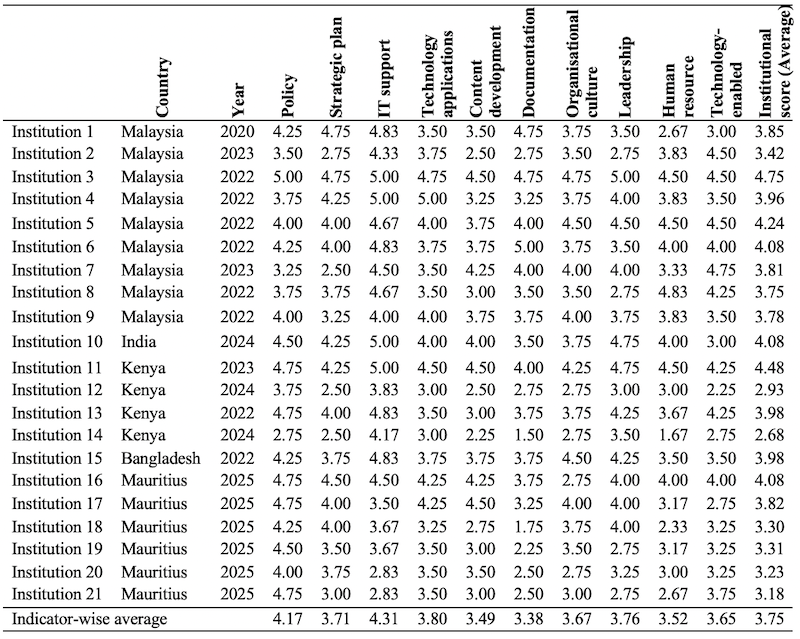

As part of the first objective of the study, we present comparative data on the ten domains covered in the toolkit. As indicated in the Methods section, the data for this analysis were extracted from the actual TEL benchmarking reports of the partner institutions. Institutional TEL benchmarking scores across the 21 institutions ranged from 2.68 to 4.75 (Table 1). Only one institution fell into the “exceptional” category (≥ 4.5), six were classified as “developing” (2.5-3.49), and the remaining 14 demonstrated “established” (3.5-4.49) TEL policy and practices. The institutions in Malaysia implemented TEL particularly well, demonstrating established policy and practices. Interestingly, of these nine institutions, only one (Institution 1) received systematic TEL implementation support from COL. The Ministry of Higher Education in Malaysia encouraged all public higher education institutions to adopt COL’s TEL benchmarking and develop their action plans accordingly. The other 12 institutions, located in various countries, received COL support in three phases. Six of these demonstrated “established” TEL practices, while the other six were in the “developing” category. In Mauritius, two institutions achieved “established” status, whereas four were still “developing”. Overall, the average scores of the 13 institutions supported by COL indicate that such assistance positively contributed to advancing TEL implementation.

Table 1: Domain-Wise Score Comparison for the Institutions

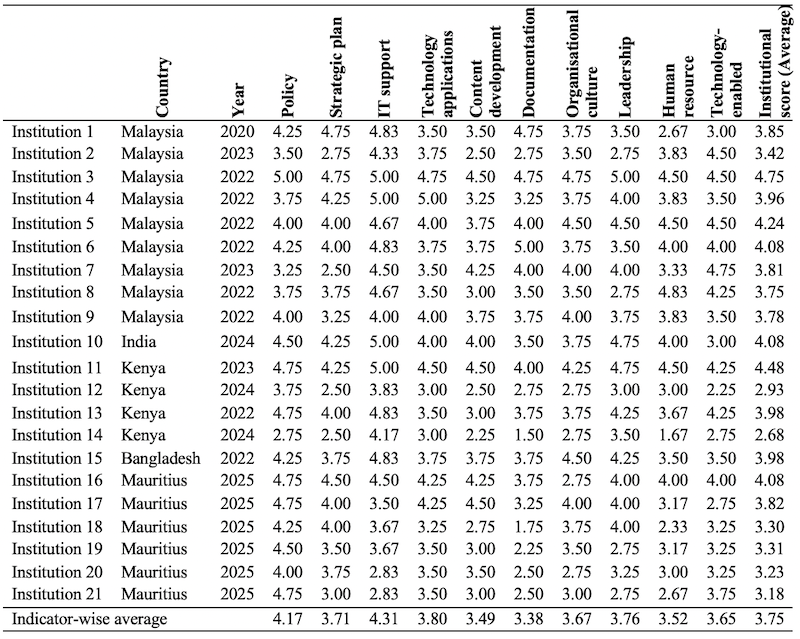

Content validity reflects how well indicators represent their respective domains. While full agreement among experts is ideal, Lawshe (1975) suggested that 50% or more agreement can be considered acceptable. In this study, rather than consulting only subject-matter experts, we collected feedback from users who had prior experience with the TEL Benchmarking Toolkit. Thus, as indicated in the Methods section, the content validity analysis is based on data from 90 users of the toolkit responding to the 44 indicators in the 10 domains. The results in Table 2 show that 12 indicators achieved a CVI above .90, 22 scored between .80 and .89, eight between .70 and .79, and only two between .60 and .69. These two lowest-scoring indicators came from the strategic plan and IT support domains: one related to financial support, which is almost always perceived as inadequate, and the other to a centralised approach to monitoring the ICT policy. Although both indicators exceeded the .50 minimum, users may have considered them less important when benchmarking TEL in an institution. The overall CVI of the indicators in the toolkit was .83, suggesting a high level of content validity. This indicates that the toolkit’s indicators generally capture their intended domains effectively, supporting its use as a valid instrument for benchmarking TEL.

Table 2: Content Validity Score of the Benchmarking Indicators of the 10 Domains

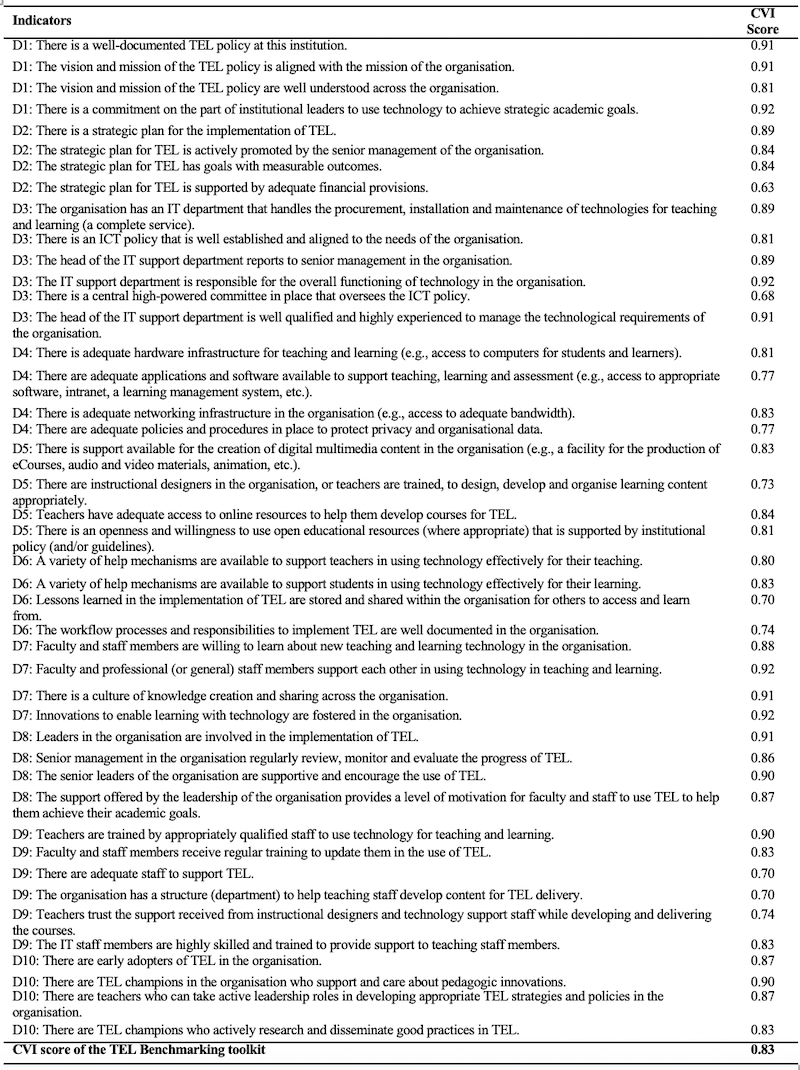

Table 3 presents the internal consistency estimates for each domain using Cronbach’s alpha, along with the number of items, average inter-item correlations, and signal-to-noise (S/N) ratios. Cronbach’s alpha values ranged from .83 (D5) to .93 (D9). Alpha values above .80 are considered good (Tavakol & Dennick, 2011); hence, the scales in this study can be considered highly reliable. Average inter-item correlations ranged from 0.53 (D1) to 0.76 (D2), indicating that items within each domain were moderately to strongly correlated; that is, they measure similar underlying constructs while still contributing unique information. The S/N ratios further supported these results, ranging from 5.1 (D5) to 13.0 (D9). A higher S/N ratio reflects greater true score variance relative to error variance, reinforcing that the measurement quality of these domains was strong. Taken together, these findings demonstrate that the domains were internally consistent and appropriate for further analyses.
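The S/N values can be related to α. Under the definition commonly used in psychometric software such as the psych package (stated here as an assumption about how Table 3 was computed), S/N equals $k\bar{r}/(1-\bar{r})$ for $k$ items with average inter-item correlation $\bar{r}$, which is the standardised α divided by its complement:

$$
\alpha_{\text{std}} = \frac{k\,\bar{r}}{1 + (k-1)\,\bar{r}}, \qquad
1 - \alpha_{\text{std}} = \frac{1 - \bar{r}}{1 + (k-1)\,\bar{r}}, \qquad
\text{S/N} = \frac{k\,\bar{r}}{1 - \bar{r}} = \frac{\alpha_{\text{std}}}{1 - \alpha_{\text{std}}}
$$

For D9, $.93/(1 - .93) \approx 13.3$, broadly in line with the reported 13.0; small gaps are expected because the reported α may be the raw rather than the standardised coefficient and all values are rounded.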

Table 3: Internal Consistency of Domains

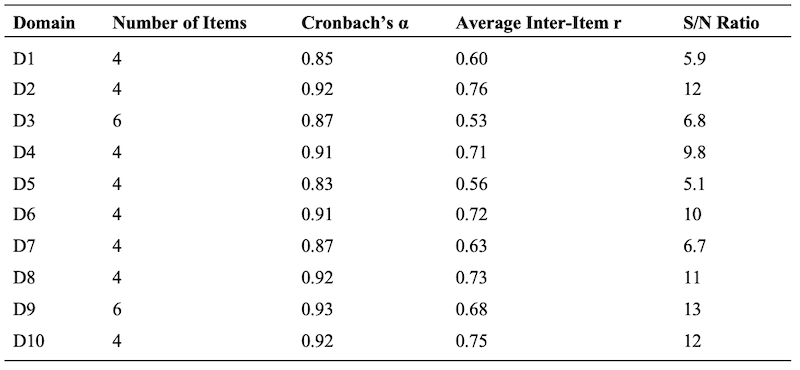

Table 4 shows the results of the Pearson correlations between domain-level mean scores. Results revealed moderate to strong positive relationships (ranging from .44 to .79) among most domains. These results suggest that while the domains were related and shared a common underlying construct, each domain also represented a unique aspect of the overall measurement framework. The strongest correlations were observed between D6 (Documentation) and D4 (Technology applications) (r = .79, p < .001) and between D6 (Documentation) and D9 (Human resource training) (r = .79, p < .001), indicating a particularly close relationship between these domains. Similarly, D1 (Policy) correlated strongly with D2 (Strategic plan) (r = .78, p < .001) and with D4 (Technology applications) (r = .74, p < .001). By contrast, the weakest association was found between D2 (Strategic plan) and D10 (TEL Champions) (r = .44, p < .001), suggesting that these two domains shared less common variance. These findings imply that the domains captured overlapping but distinct dimensions of the construct, supporting the multidimensional structure of the instrument.
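The notion of shared variance can be made concrete by squaring the correlation coefficient, since $r^2$ gives the proportion of variance that two domain scores have in common:

$$
r^2_{D6,D4} = .79^2 \approx .62, \qquad r^2_{D2,D10} = .44^2 \approx .19
$$

That is, D6 and D4 share roughly 62% of their variance, whereas D2 and D10 share about 19%, which is consistent with these domains capturing related but distinct aspects of TEL implementation.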

Table 4: Inter-Domain Correlation Matrix

*p < .001

The analysis of the responses to the open-ended questions revealed the following:

Taken together, the results demonstrate that TEL benchmarking is not only a valid measurement framework but also an effective institutional developmental tool. By linking quantitative results (validity, reliability, correlations) with qualitative evidence of institutional and individual impact, this study reinforces the potential of TEL benchmarking to drive sustainable TEL transformation.

The findings of this study show that the TEL benchmarking toolkit can be both a reliable and practical tool for higher education. The strong internal consistency and meaningful correlations between domains suggest that the framework captures how different areas of TEL are interconnected rather than treating them as separate pieces (Nunnally & Bernstein, 1994). Looking at the domain-wise results, most institutions performed better on infrastructure and policy compared to areas like pedagogy and staff capacity building. This aligns with earlier work showing that having the right technology in place does not automatically lead to real educational change (Kirkwood & Price, 2014; Laurillard, 2012). The moderate-to-strong inter-domain correlations also imply that effective TEL implementation depends on institutional systems working together, i.e., policy, leadership, and human capacity acting as reinforcing components rather than independent elements. In practical terms, this means that progress in one area, such as technology infrastructure, is unlikely to produce lasting benefits without parallel growth in faculty development and pedagogical innovation.

Cross-country variations in institutional scores further highlight the contextual nature of TEL adoption. Institutions in higher-income or better-resourced settings tended to achieve “established” scores, while smaller or developing institutions often remained in the “developing” stage. This reflects broader global disparities in digital readiness and access to resources (OECD, 2021; UNESCO IITE, 2023). However, qualitative responses revealed that even institutions with limited resources viewed benchmarking as empowering, i.e., it provided a structured way to identify priorities and justify requests for support from management or external agencies. This finding underscores that benchmarking is not just a measurement exercise but a developmental process that helps institutions chart realistic paths toward digital maturity.

Analysis of the open-ended responses also showed that most participants felt the benchmarking exercise was genuinely useful for identifying gaps and shaping institutional strategy. The Action Plan development stood out as especially valuable because it gave institutions clear steps and timelines, although participants also mentioned that stronger follow-up and communication would improve its effectiveness. Importantly, the actions reported after benchmarking, such as updating policies, training staff, and introducing blended learning, show that the process can lead to visible change, which supports past studies on digital transformation in universities (Huang et al., 2020; Sangrà et al., 2012). These qualitative insights complement the quantitative data: domains related to Human Resource Training and Organisational Culture correlated moderately with Policy and Leadership, suggesting that institutional reforms were often translated into tangible professional development activities. This alignment between structural readiness and human capacity-building demonstrates how benchmarking outcomes can stimulate organisational learning and collective ownership of TEL initiatives.

From a development perspective, TEL benchmarking also links closely with the broader “Learning for Development” agenda promoted by the COL. The process contributes to institutional resilience by helping universities systematically assess their readiness for technology integration, anticipate future disruptions, and maintain learning continuity during crises like Covid-19. Moreover, by supporting inclusive access to quality digital education, benchmarking aligns directly with Sustainable Development Goal 4 (SDG4), which emphasises equitable and lifelong learning opportunities for all (UNESCO IITE, 2023). In many participating institutions, respondents noted that improved TEL capacity expanded access for remote learners and non-traditional students, demonstrating how benchmarking can indirectly promote educational equity and social inclusion.

Overall, this study highlights how TEL benchmarking can be more than just an evaluation exercise; it can act as a roadmap for digital transformation in higher education. At the institutional level, it gives leaders a structured way to set priorities, allocate resources, and align policies through a collaborative process (Redecker, 2017). For teachers, it encourages reflection, professional growth, and greater confidence in using digital tools. At the systems level, the benchmarking process can serve as a learning mechanism that supports continuous improvement and strengthens institutional capacity to adapt, innovate, and sustain TEL practices over time. However, it is equally important to ensure that benchmarking remains adaptable to diverse contexts, especially for institutions in the Global South, where constraints such as infrastructure, funding, and staff availability continue to limit progress (Mishra & Panda, 2020; Selwyn, 2016). In sum, the integration of quantitative and qualitative findings presents a cohesive picture: the TEL benchmarking toolkit not only measures TEL maturity but also facilitates institutional learning and capacity development. It bridges the gap between evaluation and transformation and provides a pathway for universities to align digital innovation with inclusive educational development.

The study evaluated the COL’s TEL benchmarking toolkit and offers several practical takeaways for institutions and stakeholders aiming to strengthen TEL systems.

The findings highlight the need for continuous, evidence-based self-assessment to guide digital transformation. Benchmarking should not be treated as a one-time evaluation but as an ongoing reflective process that helps identify gaps, monitor progress, and sustain innovation. Institutions can also benefit from developing cross-functional TEL teams to ensure alignment between pedagogy, technology, and governance.

TEL benchmarking results can serve as a diagnostic tool to identify capacity gaps and prioritise investments. Funding schemes could focus on staff digital literacy programmes, instructional design support, and scalable infrastructure, especially for resource-limited universities. Regular benchmarking across institutions can also inform national digital education strategies and ensure alignment with SDG4 on quality education.

Agencies like the COL and other intergovernmental bodies can use benchmarking data to provide targeted technical support and facilitate peer learning networks. Encouraging regional collaboration and knowledge exchange can help contextualise TEL standards while promoting equitable access to technology-enhanced learning opportunities across diverse educational settings.

The study evaluated COL’s TEL benchmarking toolkit using survey data from practitioners across 21 higher education institutions. Institutional TEL implementation scores varied, with most institutions demonstrating established practices and a smaller number classified as developing or exceptional. Content validity analysis showed that the indicators effectively captured their respective domains, with an overall CVI of 0.83. Internal consistency was high for every domain, and inter-item correlations were moderate to strong, indicating that the items reliably measure their respective constructs. Inter-domain correlations ranged from moderate to strong, suggesting that while each domain assesses a distinct aspect of TEL implementation, the domains are meaningfully related.

The findings indicate that the toolkit is a reliable and valid instrument for benchmarking TEL practices in higher education institutions. Institutions receiving COL support generally achieved higher TEL implementation scores, highlighting the positive impact of structured guidance and support. In addition, responses to the open-ended questions showed that the TEL benchmarking exercise was useful for identifying gaps and shaping institutional strategy to support digital transformation in higher education institutions. These results provide practical insights for policymakers and practitioners seeking to assess and improve TEL initiatives.

While the study has demonstrated the validity and usefulness of the toolkit, it also has some limitations. First, the sample size was limited, and the data came from a limited number of institutions, which might affect the generalisability of the findings. Future research with larger samples and more advanced statistical analyses (such as CFA) could further test and refine the structure of the toolkit and strengthen its applicability across different educational contexts. The limited sample also means that variations between countries or institutional types (e.g., open universities vs. traditional universities) could not be fully explored. Future studies should therefore aim to include a broader range of institutions from different regions, possibly combining data from multiple benchmarking cycles to strengthen cross-country comparisons and improve external validity. Second, the study relied on self-report surveys, which means responses could have been influenced by individual perceptions. However, this limitation has been addressed to some extent through the analysis of the externally reviewed institutional benchmarking data.

Alderman, L., & Murray, S. (2025). Benchmarking: Seeking best practice. New Directions for Evaluation. https://doi.org/10.1002/ev.20634

APEC. (2019). Quality assurance of online learning toolkit. APEC. https://www.apec.org/publications/2019/12/apec-quality-assurance-of-online-learning-toolkit

Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77-101. https://doi.org/10.1191/1478088706qp063oa

Clark, L.A., & Watson, D. (1995). Constructing validity: Basic issues in objective scale development. Psychological Assessment, 7(3), 309-319. https://doi.org/10.1037/1040-3590.7.3.309

Cronbach, L.J. (1951). Coefficient alpha and the internal structure of tests. Psychometrika, 16(3), 297-334. https://doi.org/10.1007/BF02310555

de Winter, J.C.F., Dodou, D., & Wieringa, P.A. (2009). Exploratory factor analysis with small sample sizes. Multivariate Behavioral Research, 44(2), 147-181. https://doi.org/10.1080/00273170902794206

EADTU. (2012). Quality assessment for e-learning: A benchmarking approach (2nd ed.). EADTU. https://e-xcellencelabel.eadtu.eu/images/documents/Excellence_manual_full.pdf

Gorsuch, R. (1983). Factor analysis. Routledge Classic Editions. https://api.pageplace.de/preview/DT0400.9781317564898_A24443831/preview-9781317564898_A24443831.pdf

Huang, R., Spector, J.M., & Yang, J. (2020). Educational technology: A primer for the 21st century. Springer Nature Singapore.

Johnson, S.J., Blackman, D.A., & Buick, F. (2018). The 70:20:10 framework and the transfer of learning. Human Resource Development Quarterly, 29(4), 383-402. https://doi.org/10.1002/hrdq.21330

Kirkwood, A., & Price, L. (2014). Technology-enhanced learning and teaching in higher education: What is “enhanced” and how do we know? A critical literature review. Learning, Media and Technology, 39(1), 6-36. https://doi.org/10.1080/17439884.2013.770404

Kirkwood, A., & Price, L. (2016). Technology-enabled learning implementation handbook. Commonwealth of Learning. http://hdl.handle.net/11599/2363

Laurillard, D. (2012). Teaching as a design science: Building pedagogical patterns for learning and technology. Routledge.

Lawshe, C.H. (1975). A quantitative approach to content validity. Personnel Psychology, 28(4), 563-575.

MacCallum, R.C., Widaman, K.F., Zhang, S., & Hong, S. (1999). Sample size in factor analysis. Psychological Methods, 4(1), 84-99. https://doi.org/10.1037/1082-989X.4.1.84

Memon, M.A., Ting, H., Cheah, J.H., Thurasamy, R., Chuah, F., & Cham, T.H. (2020). Sample size for survey research: Review and recommendations. Journal of Applied Structural Equation Modeling, 4(2), i-xx. https://jasemjournal.com/wp-content/uploads/2020/08/Memon-et-al_JASEM_-Editorial_V4_Iss2_June2020.pdf

Mishra, S., & Panda, S. (2020). Prologue: Setting the stage for technology-enabled learning. In S. Mishra & S. Panda (Eds.), Technology-enabled learning: Policy, pedagogy and practice (pp. 3-16). Commonwealth of Learning. http://hdl.handle.net/11599/3655

Nunnally, J.C., & Bernstein, I.H. (1994). Psychometric theory (3rd ed.). McGraw-Hill.

OECD. (2021). OECD Economic Outlook. OECD Publishing. https://doi.org/10.1787/edfbca02-en

Polit, D.F., & Beck, C.T. (2006). The content validity index: Are you sure you know what's being reported? Critique and recommendations. Research in Nursing & Health, 29(5), 489-497. https://doi.org/10.1002/nur.20147

Redecker, C. (2017). European framework for the digital competence of educators. DigCompEdu. Publications Office of the European Union. https://doi.org/10.2760/178382

Revelle, W. (2023). psych: Procedures for psychological, psychometric, and personality research (Version 2.4.5) [R package]. Northwestern University. https://cran.r-project.org/package=psych

Sangrà, A., Vlachopoulos, D., & Cabrera, N. (2012). Building an inclusive definition of e-learning: An approach to the conceptual framework. International Review of Research in Open and Distributed Learning, 13(2), 145-159. https://doi.org/10.19173/irrodl.v13i2.1161

Sankey, M. (2014, January). Benchmarking for technology enhanced learning: Taking the next step in the journey. In Proceedings of the 31st Annual Conference of the Australasian Society for Computers in Learning in Tertiary Education (ASCILITE 2014). https://research.usq.edu.au/download/cd6a95872cc422815143a5fcec1bc1e09dc025453c3ad14058d8dfa1cc5d4e3b/200576/ascilite2014_Sankey_Paper.pdf

Sankey, M., & Mishra, S. (2019). Benchmarking toolkit for technology-enabled learning. Commonwealth of Learning. http://hdl.handle.net/11599/3217

Sankey, M., & Padró, F.F. (2016). ACODE Benchmarks for technology enhanced learning (TEL): Findings from a 24 university benchmarking exercise regarding the benchmarks’ fitness for purpose. International Journal of Quality and Service Sciences, 8(3), 345-362. https://doi.org/10.1108/IJQSS-04-2016-0033

Sankey, M., & Padró, F.F. (2019, September). Seeing COL’s technology-enabled learning benchmarks in the light provided by the ACODE benchmarking process. In Pan-Commonwealth Forum 9 (PCF9), 2019. Commonwealth of Learning. http://hdl.handle.net/11599/3368

Sankey, M., Carter, H., Marshall, S., Obexer, R., Russell, C., & Lawson, R. (2014). Benchmarks for technology enhanced learning. ACODE. https://ro.uow.edu.au/asdpapers/549

Sankey, M., Marshall, S., McCarthy, S., Leichtweis, S., Selvaratnam, R., Adams, N., Joubert, L., & Ames, K. (2024). The ACODE Benchmarks for Technology Enhanced Learning (2nd ed.). The Australasian Council on Open and Digital Education. https://doi.org/10.14742/apubs.2024.725

Selwyn, N. (2016). Education and technology: Key issues and debates (2nd ed.). Bloomsbury Academic.

Tabachnick, B.G., & Fidell, L.S. (2019). Using multivariate statistics (7th ed.). Pearson.

Tavakol, M., & Dennick, R. (2011). Making sense of Cronbach’s alpha. International Journal of Medical Education, 2, 53-55. https://doi.org/10.5116/ijme.4dfb.8dfd

UNESCO IITE. (2023). Benchmarking for open universities: Guidelines and good practices. UNESCO IITE. https://unesdoc.unesco.org/ark:/48223/pf0000389518

Volungevičienė, A., Brown, M., Greenspon, R., Gaebel, M., & Morrisroe, A. (2021). Developing a high-performance digital education system: Institutional self-assessment instruments. European University Association absl.

Yusoff, M.S.B. (2019). ABC of content validation and content validity index calculation. Education in Medicine Journal, 11(2), 49-54. https://doi.org/10.21315/eimj2019.11.2.6

Zamanzadeh, V., Ghahramanian, A., Rassouli, M., Abbaszadeh, A., Alavi-Majd, H., & Nikanfar, A.R. (2015). Design and implementation content validity study: Development of an instrument for measuring patient-centered communication. Journal of Caring Sciences, 4(2), 165-178. https://doi.org/10.15171/jcs.2015.017

Author Notes

Sanjaya Mishra is one of the leading scholars in open, distance, and online learning with extensive experience in teaching, staff development, research, policy development, innovation, and organisational development. With a multi-disciplinary background in education, information science, communication media, and learning and development, Dr Mishra promotes the use of educational multimedia, eLearning, open educational resources (OER), and open access to scientific information to increase access to quality education and lifelong learning for all. He has designed and developed award-winning online courses and platforms, such as Understanding Open Educational Resources, Commonwealth Digital Education Leadership Training in Action, and COLCommons. He currently serves as Education Specialist (TEL) at the Commonwealth of Learning (COL). He previously served as Director (Education) at COL, Director of Commonwealth Educational Media Centre for Asia, and Programme Specialist (ICT in Education, Science and Culture) at UNESCO. Email: smishra.col@gmail.com (https://orcid.org/0000-0003-3291-2410)

Nitesh Kumar Jha is a Post-Doctoral Fellow at the School of Learning Informatics at National Taiwan Normal University, Taiwan. He received his PhD from the Advanced Technology Development Centre, Indian Institute of Technology (IIT) Kharagpur, India. His research areas include computational thinking, learning analytics, and educational technology. Email: nitesh.jha1092@gmail.com (https://orcid.org/0000-0002-5209-1683)

Kaushal Kumar Bhagat is an assistant professor in the Advanced Technology Development Centre at the Indian Institute of Technology (IIT) Kharagpur, India, where he is also Vice Chairman of the Centre for Teaching Learning and Virtual Skilling. He received his PhD from the National Taiwan Normal University in September 2016 and then held a two-year postdoctoral position at the Smart Learning Institute at Beijing Normal University. In 2015, Dr Bhagat received the NTNU International Outstanding Achievement Award. He also received the 2017 IEEE TCLT Young Researcher Award, the 2020 APSCE Early Career Researcher Award (ECRA) from the Asia-Pacific Society for Computers in Education, and the 2022 Excellence in Distance Education Award (EDEA) from the Commonwealth of Learning (COL), Canada. He serves as editor-in-chief of Contemporary Educational Technology (CET) and is an editorial board member of several reputed international journals. He is a consultant for international organisations such as the Commonwealth of Learning and UNESCO. His research interests include augmented reality, virtual reality, game-based learning, online learning, and technology-enhanced learning. Email: kkntnu@hotmail.com (https://orcid.org/0000-0002-6861-6819)

Cite as: Mishra, S., Jha, N.K., & Bhagat, K.K. (2025). Review and validation of the benchmarking of technology-enabled learning. Journal of Learning for Development, 12(3), 450-468.