Nantha Kumar Subramaniam

2026 VOL. 13, No. 1

Abstract: This study examines CodeMentor-AI, an AI-powered tool that delivers immediate, adaptive and context-aware feedback on programming tasks. It supports iterative practice and self-managed learning to better prepare ODL learners for graded assessment. In a pilot focus group with eight ODL programming students, participants reported rapid assistance, clearer understanding, practical gains, sustained engagement and motivation, and increased confidence in tackling the graded assessment; collectively, these outcomes strengthened their self-managed learning.

Keywords: AI-generated feedback, assessment, Open and Distance Learning (ODL), self-managed learning

Feedback in learning plays a pivotal role in supporting students' progress toward their educational goals by providing insights into areas for improvement and affirming their strengths. According to Hattie and Timperley (2007), effective feedback offers students actionable guidance that fosters a deeper understanding of the subject while encouraging self-regulated learning. Similarly, Nicol and Macfarlane-Dick (2006) emphasise that timely, specific, and constructive feedback enhances learning outcomes, boosts student confidence, and sustains engagement. By bridging the gap between current performance and desired outcomes, feedback not only motivates learners but also helps instructors adapt their teaching to individual and collective learning needs. Used in this way, feedback becomes a tool for continuous improvement that keeps learning inclusive and adaptive.

Open and Distance Learning (ODL) has become a cornerstone of modern education, offering learners the flexibility and accessibility to pursue their studies regardless of geographical, time, or personal constraints. By removing the traditional barriers of time, place, and pace, ODL allows learners to engage with course materials from anywhere in the world and at their own convenience (Sociology Institute, 2024). Frontiers in Education (2024) highlighted the role of flexibility and personalisation in higher education, emphasising the importance of innovative technologies for creating inclusive learning environments. ODL has thus opened doors for a diverse group of learners who might not have access to traditional classroom-based education. However, while ODL offers significant benefits, it also presents unique challenges, particularly in delivering effective feedback, a vital component for promoting engagement, fostering deeper learning, and ensuring academic success.

In traditional educational settings, students benefit from real-time interaction with instructors and peers, enabling immediate feedback and collaborative problem-solving. In contrast, ODL learners often study in isolation, which can lead to feelings of disconnection and hinder timely clarification of doubts or reinforcement of concepts. This makes feedback delivery in ODL not only more complex but also crucial to the learner's progress and motivation. This becomes even more critical in technical disciplines such as engineering and computer programming, which rely heavily on iterative problem-solving, precision, and a thorough understanding of concepts. These requirements are best supported through timely and targeted feedback. Steinert et al. (2024) further underscore this by presenting an open-source automated feedback system for STEM education, highlighting the importance of personalised feedback mechanisms to effectively support learners in these fields.

In this context, self-managed learning—a process where learners take responsibility for planning, monitoring, and evaluating their own learning—becomes an essential strategy. It empowers ODL students to use feedback as a tool for reflection and self-improvement, helping them identify strengths, address weaknesses, and set actionable goals. Effective feedback systems in ODL environments must therefore be designed to be timely, constructive, and easily accessible, guiding learners toward achieving their academic objectives while maintaining their motivation and sense of connection to the learning process.

However, conventional feedback mechanisms in ODL environments often face challenges in addressing the needs of a diverse and dispersed learner population. Common issues include delays in feedback delivery, variability in quality, and a lack of personalised responses. These shortcomings can impede learners' progress, diminish their confidence, and lower their overall satisfaction with the learning experience.

Feedback in digital learning environments can take many forms—digital tutors, chatbots, or automated comments generated by the system. Regardless of delivery medium, what matters most is that the feedback is timely, specific, and aligned with the learning goals. Prior automated (non-AI) systems show learning gains but tend to be narrow and course-specific, relying on simple compare-to-solution checks and dashboards rather than supporting problem-solving processes or self-regulated learning across contexts (Cavalcanti et al., 2021).

Recent feedback systems reported in the literature illustrate how automation and AI are used to support student learning. Hobert and Berens (2024) developed a chatbot-based “digital tutor” that offers content access, formative quizzes, and instant feedback during lectures to support scalable, continuous learning. Jasin et al. (2023) developed an affective, question-answering chatbot trained on instructor-immediacy techniques for an online chemistry course, in which students experienced round-the-clock support and more human-like engagement. Hu et al. (2023) combined robotic process automation and predictive analytics in an Intelligent Tutoring Robot to deliver automatic responses and an early-warning mechanism for distance learners. In writing instruction, automated argumentation feedback paired with social-comparison nudging led students to produce more convincing texts (Wambsganss et al., 2022).

Despite the many automated feedback tools developed, a core gap remains: most systems still prioritise answer-matching and generic, one-size-fits-all hints over process-level guidance that adapts to a learner’s ability, misconceptions, and pace. As a result, feedback is poorly calibrated in difficulty and granularity, rarely builds on prior attempts, offers limited scaffolding, and too often fails to prepare students for summative assessment (Cavalcanti et al., 2021; Lee & Moore, 2024).

Positioned against these gaps, this study targets two objectives:

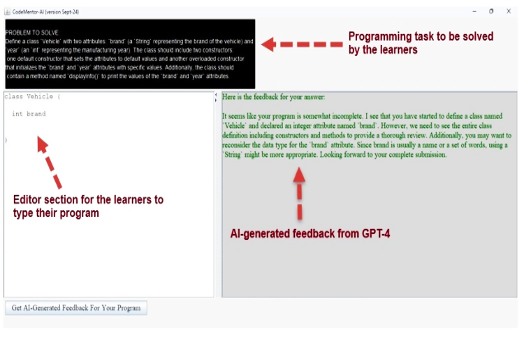

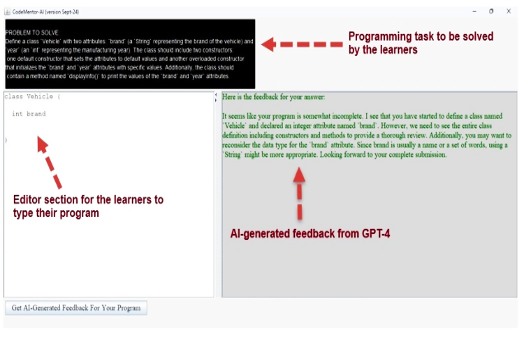

CodeMentor-AI, developed by the author, is an application powered by a large language model backend, GPT-4 (OpenAI, 2023), designed to provide learners with immediate and adaptive feedback (see Figure 1). GPT-4 is integrated into CodeMentor-AI through an Application Programming Interface (API), which allows the application to analyse each learner’s submission and return context-specific, tailored guidance.

CodeMentor-AI offers a new approach to automated feedback: its GPT-4-powered interface integrates predefined Java programming tasks with dynamic, contextual feedback. Unlike conventional platforms where students submit open-ended solutions, the application provides guided learning through carefully designed tasks, each focused on building specific programming skills and concepts.

The predefined tasks are structured to address particular learning outcomes, promoting a step-by-step mastery of programming skills. The application evaluates submissions against well-defined criteria, assessing not only correctness but also coding style, efficiency, and adherence to best practices. Feedback is tailored to the learner’s proficiency level: beginners receive foundational explanations and detailed analyses of errors, while advanced learners are offered optimisation suggestions and alternative approaches. This adaptive feedback mechanism evolves alongside the learner’s progress, encouraging iterative refinement and fostering a deeper understanding of programming concepts. In CodeMentor-AI, the feedback mechanism identifies gaps, reduces cognitive load, and provides corrective guidance.

By mirroring the skill taxonomy and criteria of the graded assignment, CodeMentor-AI enables targeted, iterative practice that prepares learners for summative assessment, while strengthening self-managed learning as they resubmit, reflect, and refine within a structured pathway.
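CodeMentor-AI's source code is not published in this article. As an illustration only, the following Java sketch shows one way the evaluation criteria and the level-based tailoring described above might be combined into a single request for the language model. The `FeedbackPromptBuilder` class, the `Level` enum and the prompt wording are hypothetical; the call to the GPT-4 API itself is deliberately omitted, since the client and endpoint used by the tool are not described.

```java
import java.util.List;

/** Hypothetical proficiency levels; the article distinguishes beginners from advanced learners. */
enum Level { BEGINNER, ADVANCED }

/**
 * Illustrative sketch only: assembles a level-aware feedback request for a predefined Java task.
 * Class name, method names and prompt wording are assumptions, not CodeMentor-AI's actual code.
 */
final class FeedbackPromptBuilder {

    /** Evaluation criteria named in the article. */
    static final List<String> CRITERIA =
            List.of("correctness", "coding style", "efficiency", "adherence to best practices");

    private FeedbackPromptBuilder() { }

    /** Returns the guidance that shapes feedback for the given level. */
    static String guidanceFor(Level level) {
        switch (level) {
            case BEGINNER:
                return "Give foundational explanations and a detailed analysis of each error.";
            case ADVANCED:
                return "Offer optimisation suggestions and alternative approaches.";
            default:
                throw new IllegalArgumentException("Unknown level: " + level);
        }
    }

    /** Combines the task, the criteria, level guidance and the learner's code into one prompt. */
    static String buildPrompt(String taskDescription, Level level, String submittedCode) {
        StringBuilder prompt = new StringBuilder();
        prompt.append("You are a tutor giving feedback on a Java programming task.\n");
        prompt.append("Task: ").append(taskDescription).append('\n');
        prompt.append("Evaluate the submission for: ")
              .append(String.join(", ", CRITERIA)).append(".\n");
        prompt.append("Learner level: ").append(level).append(". ")
              .append(guidanceFor(level)).append('\n');
        prompt.append("Submission:\n").append(submittedCode);
        // The resulting string would be sent to GPT-4 via whichever API client is in use (omitted here).
        return prompt.toString();
    }
}
```

Fixing the criteria and level guidance in code, rather than leaving them entirely to the model, is one plausible way to keep feedback consistent across tasks and aligned with the assignment's criteria.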

The tool was piloted in September 2024 at Open University Malaysia (OUM) in a core ODL Java programming course for the Diploma in Information Technology. Twenty-four students used the system voluntarily.

The course was coordinated by the author. Students first used CodeMentor-AI as part of their self-managed learning before attempting their graded assignment. The problem they solved in CodeMentor-AI was mapped to the same skill taxonomy as the assignment question. This mapping gives learners a structured progression that builds their confidence and readiness to tackle the assignment. By letting students practise and refine their skills with immediate feedback, the application aims not only to deepen their understanding but also to bridge the gap between learning and assessment, so that they feel better prepared for their academic tasks.

The students can submit their solutions as many times as they wish, allowing them to engage in an iterative learning process. This flexibility encourages learners to experiment with different approaches, refine their coding skills, and address errors without the pressure of penalties for incorrect submissions. Each submission is evaluated by the application, which provides immediate, tailored feedback to guide students toward improvement. This iterative model promotes a growth mindset by emphasising learning and mastery over mere correctness, enabling students to build confidence and develop a deeper understanding of programming concepts through repeated practice and gradual progress.
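The unlimited, penalty-free resubmission workflow can be modelled as a session that simply accumulates attempts. The Java sketch below is a hypothetical illustration, not the tool's implementation: `PracticeSession` and `Attempt` are invented names, the feedback text is assumed to come from the language model, and whether a submission "meets the criteria" is assumed to be a yes/no judgement per attempt.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

/** One evaluated submission (hypothetical): the code, the feedback returned, and the verdict. */
final class Attempt {
    final String code;
    final String feedback;
    final boolean meetsCriteria;

    Attempt(String code, String feedback, boolean meetsCriteria) {
        this.code = code;
        this.feedback = feedback;
        this.meetsCriteria = meetsCriteria;
    }
}

/** Hypothetical practice session allowing unlimited, penalty-free resubmission. */
final class PracticeSession {
    private final List<Attempt> history = new ArrayList<>();

    /** Records an attempt; there is no cap on the number of attempts. */
    void record(Attempt attempt) {
        history.add(attempt);
    }

    int attemptCount() {
        return history.size();
    }

    /** The task counts as completed once any attempt meets the criteria; earlier failures are not penalised. */
    boolean completed() {
        for (Attempt a : history) {
            if (a.meetsCriteria) {
                return true;
            }
        }
        return false;
    }

    /** Read-only view of past attempts, so a learner can review how the feedback changed over time. */
    List<Attempt> history() {
        return Collections.unmodifiableList(history);
    }
}
```

Because completion depends only on whether some attempt meets the criteria, unsuccessful earlier submissions carry no penalty, and the stored history gives learners a record to reflect on.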

At the end of the semester, a pilot evaluation was conducted using a focus group of eight randomly selected students who voluntarily agreed to participate, in order to examine the perceived benefits of CodeMentor-AI and how it supports self-managed learning. The author facilitated the session with a semi-structured interview guide, allowing flexible probing while ensuring key topics were addressed. This format yields rich qualitative insights by inviting students to share detailed reflections and respond to one another’s views (Greenbaum, 1998).

Ethical procedures were observed, including informed consent, voluntary participation, confidentiality, and neutral facilitation. These safeguards protected participants’ rights and privacy while enabling their feedback to inform improvements to the learning tool and experience. The next section presents the findings from the focus group.

Students’ feedback from the focus group discussions indicates a positive reception. They valued the immediate, context-aware feedback and the iterate–resubmit workflow. Students reported clearer understanding of targeted lesson concepts, practical gains in code structuring and debugging, and faster progression, findings consistent with evidence that personalised feedback improves learning outcomes (Kochmar et al., 2020). They also described increased confidence in completing the graded assignment after working through CodeMentor-AI’s problem-solving session. Regarding self-managed learning, students reported that CodeMentor-AI helped them stay engaged on task without consulting extra resources, because they could track, evaluate, and reflect on their progress within the tool.

The implementation of CodeMentor-AI highlighted several key insights into both students’ and academics’ receptiveness to a new teaching and learning approach and the integration of technology in the learning process. The key observations were as follows:

Observation #1: Feedback as a Motivator for Learning

Feedback proved to be a crucial element in enhancing student engagement and motivation. Providing timely, relevant, and constructive feedback not only helped learners improve their understanding and performance but also boosted their confidence in addressing academic challenges. The application illustrated that consistent and accessible feedback was a strong motivator, instilling a sense of accomplishment and promoting a proactive attitude toward learning.

Observation #2: Iterative Learning Through Multiple Submissions

The implementation of the application revealed that giving students the flexibility to submit their solutions multiple times fostered an iterative learning approach. This process encouraged students to experiment with different strategies, refine their understanding, and progressively improve their work based on the immediate feedback provided by the application. Because learning was framed as a continuous process rather than a one-time evaluation, students reported increased confidence and deeper engagement with the material, which is essential for mastering complex programming concepts.

Observation #3: Alignment of Practice Tasks with Learning Outcomes

Another important takeaway was the significance of aligning practice tasks with the same taxonomy of skills as graded assignments. This alignment gave students a clear roadmap, bridging the gap between self-managed learning and formal assessments. The application’s ability to guide learners through curated challenges that mirrored the complexity and scope of assignment questions enhanced their preparedness and confidence. This approach also highlighted how well-structured tasks, paired with targeted feedback, can support skill progression and reduce the anxiety often associated with high-stakes assessments.

Observation #4: Tailored Feedback and Structured Learning with CodeMentor-AI

In this context, CodeMentor-AI appears better suited than general-purpose ChatGPT because it provides a structured, task-oriented learning experience with predefined programming challenges that target specific skills. Whereas ChatGPT gives open-ended, general responses, CodeMentor-AI delivers dynamic, context-specific feedback tailored to each task, offering clearer guidance and minimising confusion for learners.

In future iterations, CodeMentor-AI could start with a short diagnostic pre-test to gauge each learner’s starting point, then select or generate tasks at an appropriate difficulty level and adapt them as the learner progresses. Feedback would be tuned to that profile—more step-by-step hints and structured scaffolding for beginners, and higher-level cues with lighter guidance for advanced learners—so challenge rises in tandem with demonstrated mastery. Expanding the number and variety of tasks would significantly enhance its educational value by catering to different skill levels and learning outcomes—without losing the tool’s focus on preparing students for assessment.
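The proposed diagnostic placement and progression could be prototyped along the following lines. This Java sketch is speculative and not part of the current tool: the class `AdaptivePath`, the three tiers (the article names only beginners and advanced learners), the 50% and 80% pre-test cut-offs, and the rule of moving up after two consecutive solved tasks are all assumptions chosen for illustration.

```java
/** Hypothetical difficulty tiers; the intermediate tier is an assumption. */
enum Tier { BEGINNER, INTERMEDIATE, ADVANCED }

/**
 * Speculative sketch of diagnostic placement plus mastery-based progression.
 * Cut-offs (50% and 80%) and the two-successes rule are illustrative assumptions.
 */
final class AdaptivePath {
    private Tier tier;
    private int consecutiveSuccesses = 0;

    AdaptivePath(int preTestCorrect, int preTestTotal) {
        this.tier = placeFromPreTest(preTestCorrect, preTestTotal);
    }

    /** Maps a diagnostic pre-test score to a starting tier. */
    static Tier placeFromPreTest(int correct, int total) {
        if (total <= 0) {
            throw new IllegalArgumentException("total must be positive");
        }
        double ratio = (double) correct / total;
        if (ratio < 0.5) {
            return Tier.BEGINNER;
        }
        if (ratio < 0.8) {
            return Tier.INTERMEDIATE;
        }
        return Tier.ADVANCED;
    }

    Tier tier() {
        return tier;
    }

    /** Feedback style tuned to the current tier: more scaffolding for beginners, lighter cues later. */
    String hintStyle() {
        switch (tier) {
            case BEGINNER:
                return "step-by-step hints with structured scaffolding";
            case INTERMEDIATE:
                return "targeted hints with partial scaffolding";
            default:
                return "high-level cues with light guidance";
        }
    }

    /** Raises the tier after two consecutive solved tasks; a failure resets the streak. */
    void recordTaskResult(boolean solved) {
        if (!solved) {
            consecutiveSuccesses = 0;
            return;
        }
        consecutiveSuccesses++;
        if (consecutiveSuccesses >= 2 && tier != Tier.ADVANCED) {
            tier = Tier.values()[tier.ordinal() + 1];
            consecutiveSuccesses = 0;
        }
    }
}
```

Resetting the success counter after each promotion means a learner must again demonstrate mastery at the new tier before the challenge rises further, which matches the idea of difficulty rising in tandem with demonstrated mastery.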

The tool could also be extended beyond programming by swapping in domain-specific problems (e.g., accounting cases or business analytics scenarios), enabling broader use with minimal redesign.

Cavalcanti, A.P., Barbosa, A., Carvalho, R., Freitas, F., Tsai, Y.S., Gašević, D., & Mello, R.F. (2021). Automatic feedback in online learning environments: A systematic literature review. Computers and Education: Artificial Intelligence, 2, 100027. https://doi.org/10.1016/j.caeai.2021.100027

Frontiers in Education. (2024). Flexibility in higher education: Personalization and innovative technologies for inclusive learning. Frontiers in Education, 9, Article 1347432. https://doi.org/10.3389/feduc.2024.1347432

Greenbaum, T.L. (1998). The handbook for focus group research. SAGE Publications. https://doi.org/10.4135/978141298615

Hattie, J., & Timperley, H. (2007). The power of feedback. Review of Educational Research, 77(1), 81-112. https://doi.org/10.3102/003465430298487

Hobert, S., & Berens, F. (2024). Developing a digital tutor as an intermediary between students, teaching assistants, and lecturers. Educational Technology Research and Development, 1-22. https://doi.org/10.1007/s11423-023-10293-2

Hu, Y-H., Fu, J-S., & Yeh, H-C. (2023). Developing an early-warning system through robotic process automation: Are intelligent tutoring robots as effective as human teachers? Interactive Learning Environments, 1-14. https://doi.org/10.1080/10494820.2022.2160467

Jasin, J., Ng, H.T., Atmosukarto, I., Iyer, P., Osman, F., Wong, P.Y.K., Pua, C.Y., & Cheow, W.S. (2023). The implementation of chatbot-mediated immediacy for synchronous communication in an online chemistry course. Education and Information Technologies, 28(8), 10665-10690. https://doi.org/10.1007/s10639-023-11602-1

Kochmar, E., Vu, D.D., Belfer, R., Gupta, V., Serban, I.V., & Pineau, J. (2020). Automated personalized feedback improves learning gains in an intelligent tutoring system. In S. Isotani, E. Millán, A. Ogan, P. Hastings, B. McLaren, & R. Luckin (Eds.), Artificial intelligence in education. 21st international conference, AIED 2020, Ifrane, Morocco, July 6–10, 2020, Proceedings, Part II (Vol. 12164, pp. 140-146). Springer. https://doi.org/10.1007/978-3-030-52240-7_26

Lee, S.S., & Moore, R.L. (2024). Harnessing generative AI (GenAI) for automated feedback in higher education: A systematic review. Online Learning, 28(3), 82-104. https://doi.org/10.24059/olj.v28i3.4593

Nicol, D.J., & Macfarlane-Dick, D. (2006). Formative assessment and self-regulated learning: A model and seven principles of good feedback practice. Studies in Higher Education, 31(2), 199-218. https://doi.org/10.1080/03075070600572090

OpenAI. (2023). GPT-4 technical report. https://openai.com/research/gpt-4

Sociology Institute. (2024). Enhancing equity and access through open and distance learning. Sociology of Education. https://sociology.institute/sociology-of-education/enhancing-equity-access-open-distance-learning/

Steinert, P., Nguyen, H.T., & Sharma, N. (2024). Lessons learned from designing an open-source automated feedback system for STEM education. arXiv Preprint. https://arxiv.org/abs/2401.10531

Wambsganss, T., Janson, A., & Leimeister, J.M. (2022). Enhancing argumentative writing with automated feedback and social comparison nudging. Computers & Education, 191, 104644. https://doi.org/10.1016/j.compedu.2022.104644

Author Notes

Nantha Kumar Subramaniam is a Professor at Open University Malaysia (OUM) with over 20 years of experience in computing, specialising in intelligent learning systems, natural language processing, and Computer Science Education. He previously served as Adviser for Technology-Enabled Learning at the Commonwealth of Learning (COL), Canada. Nantha has received numerous awards for his contributions to digital education, including the Best E-Learning Product at the International University Carnival on E-Learning (IUCEL) 2021 and multiple Gold Medals at Asian Association of Open Universities (AAOU) conferences for innovations in adaptive and intelligent learning systems. He is currently the Dean of the Faculty of Computing and Analytics at OUM, where he continues to lead initiatives in AI-driven learning, digital innovation, and open and distance education. Email: nanthakumar@oum.edu.my (https://orcid.org/0000-0002-5870-5949)

Cite as: Subramaniam, N.K. (2026). CodeMentor-AI: AI-powered feedback application for programming in open and distance learning. Journal of Learning for Development, 13(1), 198-204.