Nantha Kumar Subramaniam and Santhi Raghavan

2025 VOL. 12, No. 3

Abstract: This study explores the integration of an AI-powered feedback system within Open University Malaysia’s assessment ecosystem to enhance formative assessment in Open and Distance Learning (ODL). Implemented across 12 first-semester courses, the system provided timely, personalised, and constructive feedback on student assignments. Findings from student surveys indicated high satisfaction and intention to reuse the system. The results underscore the system’s effectiveness in supporting self-regulated learning and reducing instructors’ workload, marking a significant innovation in digital assessment practices.

Keywords: AI-generated feedback, formative assessment, Open and Distance Learning (ODL)

Formative assessment is an ongoing process used by educators to monitor student learning and provide feedback that guides instructional adjustments (Black & Wiliam, 1998). It differs from summative assessment by focusing on improvement rather than final evaluation. Through timely and constructive feedback, formative assessment helps students identify their strengths, address learning gaps, and enhance their performance. This paper reports on the implementation of an AI-powered feedback system to enhance formative assessment in the context of ODL, and on its acceptance by faculty and students.

Formative assessment has long been recognised as a key pedagogical strategy for improving student learning, with feedback serving as its central component. Feedback in formative assessment plays a critical role in guiding students toward achieving their learning goals by assessing and identifying areas for improvement and reinforcing strengths. Effective feedback provides students with actionable insights, promoting a deeper understanding of the subject and fostering self-regulated learning (Hattie & Timperley, 2007). It enables learners to close the gap between their current performance and desired outcomes, thereby enhancing motivation and engagement (Sadler, 1989). Specific, timely, and constructive feedback has been shown to improve learning outcomes and build student confidence (Nicol & Macfarlane-Dick, 2006), and when learners are actively engaged, they can effectively achieve the learning objectives and acquire the desired skills (Pinto-Llorente & Izquierdo-Álvarez, 2024). Moreover, formative feedback helps instructors tailor their teaching strategies to address common misconceptions and individual learning needs, thereby creating a more inclusive and adaptive learning environment (Shute, 2008). As an ongoing process, feedback facilitates continuous improvement and ensures that assessment contributes not only to evaluating learning but also to actively supporting it (Black & Wiliam, 1998). Formative feedback enables learners and educators to enhance the teaching-learning process, promoting improved quality, adaptability, and sustainability (Nguyen & Tuamsuk, 2022).

Traditional feedback mechanisms often suffer from delays and inconsistencies, which can negatively affect student engagement, motivation, and overall learning outcomes (Luckin et al., 2016). These limitations may hinder learners’ progress, undermine their confidence, and reduce satisfaction with the learning experience (Choy & Quek, 2016). AI-powered tools offer a promising solution by delivering automated, context-aware feedback that not only enhances conceptual understanding but also supports self-regulated learning (Zawacki-Richter et al., 2019). In this context, recent literature points to a growing need for AI-based feedback systems that can provide scalable, timely, and personalised responses to student assignments. For instance, FlowHunt (2024) describes how AI-powered feedback tools provide real-time, individualised insights, enhancing learning outcomes in large-scale educational settings. Similarly, Analytikus (2024) discusses the role of AI in automating assessment and feedback processes, enabling educators to efficiently manage large volumes of student work while maintaining personalised support. Another relevant example is EvaluMate, an AI-enhanced peer review system that leverages ChatGPT to support students in generating constructive feedback in writing classrooms by providing scaffolded guidance (Guo, 2024).

The present study investigates the integration of an AI-powered feedback system within Open University Malaysia’s assessment ecosystem to enhance formative assessment in Open and Distance Learning (ODL). This study holds significant relevance and contributes to ongoing discourse on enhancing feedback practices in digitally mediated education.

OUM implemented the AI-powered Assignment Feedback System (AI-AFS) through a research collaboration with Studiosity Pty Ltd (https://www.studiosity.com/). AI-AFS is an online platform that provides ethical, personalised, and immediate feedback on students’ draft assignments prior to final submission for grading.

AI-AFS bridges the gap between the need for immediate feedback and the constraints of Open and Distance Learning (ODL), offering 24/7 access to guidance on key aspects such as structure, language, argumentation, referencing, and grammar. This flexibility is particularly valuable for ODL learners, who often balance academic responsibilities with work and family commitments and require an inclusive learning environment.

The system’s integration at OUM aligns with its commitment to enhancing the quality of learning experiences for its large and diverse student population. AI-AFS transforms feedback from a delayed and often generic process into a dynamic and interactive component of learning. Its structured workflows and detailed annotations empower students to continuously refine their assignments, fostering deeper engagement with the learning process. As part of a pilot initiative, OUM deployed AI-AFS across 12 subjects, representing a broad spectrum of academic disciplines and learner needs.

The AI system leverages structured workflows to provide detailed feedback on various aspects of student assignments, including grammar, structure, argumentation, and referencing. The Writing Feedback platform specifically reviews student work in the following areas:

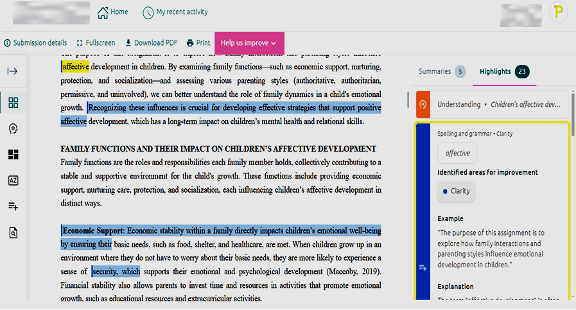

The review report includes both "summaries" and "highlight". "Summaries" provides explanations and condenses the information, offering a clearer understanding or reference for the key points highlighted (see Figure 1). In contrast, "highlight" identifies key points in the text, drawing attention to specific ideas or details (see Figure 2).

AI-assisted feedback is provided through in-text annotations and related comments, supplemented by a comprehensive feedback summary. This system does not directly edit or change the content of student scripts. Instead, it highlights and discusses commonly made mistakes, incorporating examples to help students understand and address these issues. This approach empowers students to apply the feedback to their current and future writing tasks, fostering long-term critical-thinking skill development. Feedback on spelling and grammar emphasises patterns of errors within the document, allowing students to recognise systemic and syntax issues in their writing. Identified errors are highlighted and thoroughly explained, with relevant examples provided for clearer understanding.

AI-AFS offers a streamlined process that allows students to actively engage with their assignments before the final submission of their completed coursework or projects and improve their work continuously. Since this system can only assist with written tasks, other assessments such as mid-term examinations, audio or video presentations, and online class participation are not included. Engaging with AI-AFS multiple times before the final submission deadline is crucial, as it helps students stay on track and monitor their learning progress effectively.

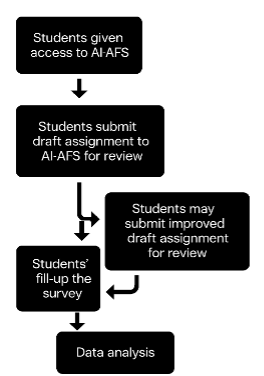

The following steps outline the typical workflow for students using the AI-AFS system:

The system was utilised by the ODL students enrolled in 12 different first-semester courses over a 14-week semester. The effectiveness of the system was evaluated using a quantitative approach, as detailed in Figure 3.

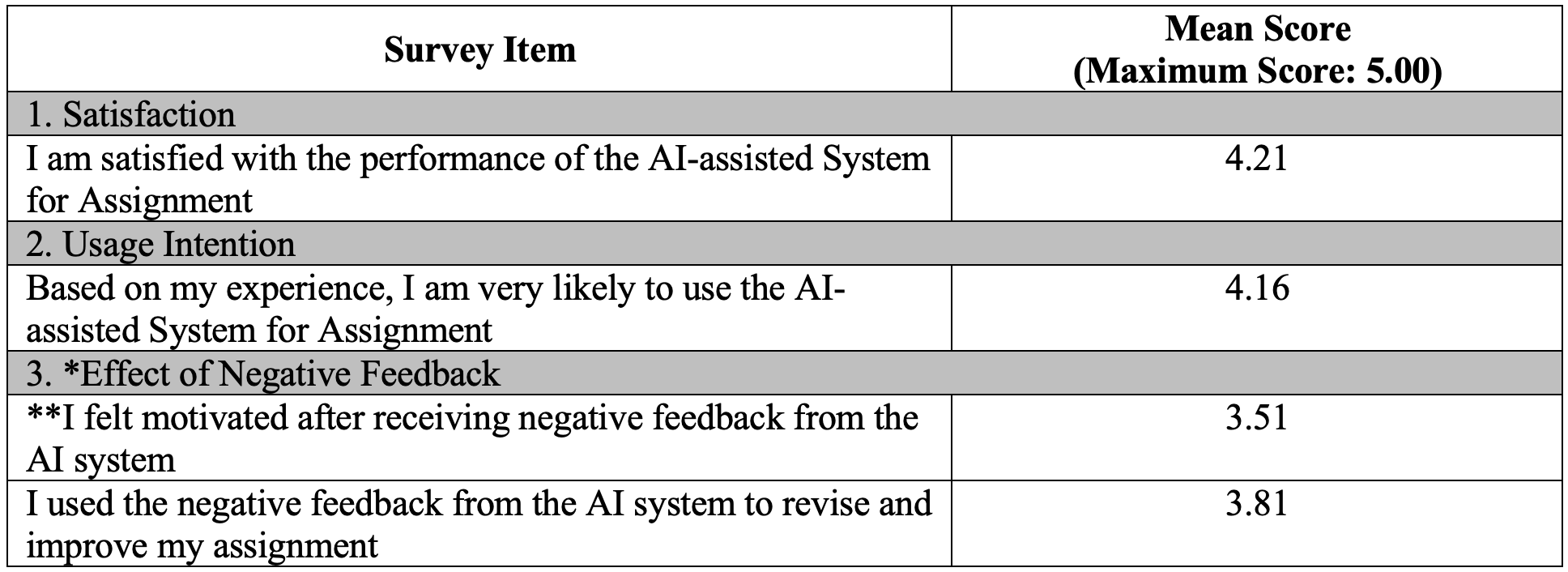

At the end of the semester, students were invited to complete an online survey measuring their satisfaction, intention to continue using the system, and their response to receiving negative feedback. Responses were recorded through a quantitative approach using a five-point Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree). A total of 351 students completed the survey, representing 28.2% of the total system users. Of the respondents, 78.3% were female and 21.7% male, with an average age of 31.3 years. In terms of academic level, 57.3% were postgraduate students, while 42.7% were enrolled in undergraduate programmes. On average, each student had two interactions with the AI-assisted system. The survey outcome is shown in Table 1.

Table 1: Survey Results on Student Satisfaction, Usage Intention and Responses to Negative Feedback

*Negative feedback refers to constructive input that points out errors or areas needing improvement, such as issues with language structure, writing style, critical thinking, language use, spelling and grammar.

**The original question was negatively worded, where higher scores reflected more negative attitudes. To align with the positive phrasing used in the analysis, this question was reworded to be positive, and the values were reverse-coded.
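The reverse-coding step described in the note above can be illustrated with a minimal sketch. It assumes the standard transformation for a five-point scale, in which a reversed score equals the sum of the scale endpoints minus the original score (6 minus the score). The function name `reverse_code` and the sample responses are hypothetical; they are not the study's data or analysis code.

```python
from statistics import mean

# Endpoints of the five-point Likert scale used in the survey.
LIKERT_MIN = 1
LIKERT_MAX = 5


def reverse_code(score: int, low: int = LIKERT_MIN, high: int = LIKERT_MAX) -> int:
    """Reverse-code a Likert response so that higher values mean a more positive attitude."""
    if not low <= score <= high:
        raise ValueError(f"score {score} is outside the {low}-{high} scale")
    return low + high - score


# Hypothetical responses to a negatively worded item (NOT the study's data).
raw_responses = [1, 2, 2, 4, 3]

# After reverse-coding, 1 becomes 5, 2 becomes 4, and the midpoint 3 is unchanged.
recoded = [reverse_code(s) for s in raw_responses]

print(recoded)        # [5, 4, 4, 2, 3]
print(mean(recoded))  # 3.6
```

Under this transformation, a low mean on the negatively worded item becomes a correspondingly high mean on the positively worded version, which is how a reverse-coded item can be read alongside the other items in the table.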

The findings indicate a generally positive reception of AI-AFS among learners. High levels of satisfaction were reported, with a mean score of 4.21, suggesting that students were pleased with the system’s performance and perceived it as a valuable tool in their learning process. This satisfaction is further reinforced by a strong intention to reuse the system in the future, with a mean score of 4.16, reflecting learners’ confidence in the system’s usefulness and effectiveness.

Regarding the system’s handling of negative feedback, the results present a nuanced view. The mean score of 3.51 for the statement "I felt motivated after receiving negative feedback from the AI system" indicates that, on average, students did not feel significantly demotivated by the feedback. This was complemented by a relatively high score of 3.81 for the item "I used the negative feedback from the AI system to revise and improve my assignment", indicating that students generally responded to critical feedback in a constructive manner. Although Er et al. (2024) found that instructor feedback is perceived as more useful than AI-generated feedback, the present study suggests that AI-based feedback can still play a meaningful role in promoting student engagement and improvement, particularly by offering immediate, accessible, and iterative support in writing-based assignments within the ODL environment.

These findings imply that the AI system not only supported learner satisfaction and intention to use but also encouraged adaptive responses to feedback, fostering a growth-oriented learning mindset.

The implementation of this system highlighted several key insights into both students’ and instructors’ receptiveness to a new teaching and learning approach as well as the integration of technology into their learning process.

The key lessons are as follows:

Lesson #1: Learners’ Acceptance of AI-Generated Feedback

Learners displayed a strong level of acceptance of AI-generated feedback, appreciating its ability to provide immediate and actionable insights into their work. This receptiveness reflected a shift in learners’ perceptions, with many viewing AI as a reliable, valuable, and complementary tool for supporting their academic progress. However, it also underlined the need for AI feedback to be accurate, clear, and contextually relevant in order to maintain trust and maximise its effectiveness.

Lesson #2: Reduction in Academic Workload for Providing Feedback

Based on discussions with the academics responsible for subjects that implemented AI-AFS, the system significantly alleviated the burden of delivering individualised feedback. By automating the feedback process, the system enabled educators to dedicate more time to other essential teaching tasks, such as curriculum design and personalised student support. This reduction in workload highlighted the potential of AI to streamline repetitive tasks and improve efficiency without compromising the quality of learning experiences.

These lessons highlight the transformative potential of technology in education, emphasising the need for thoughtful implementation that addresses both learners’ needs and academic priorities.

Future work could prioritise expanding the use of AI-AFS to a broader range of courses, enabling more learners across diverse academic disciplines to benefit from personalised and timely feedback. This expansion will require tailoring AI tools to meet the specific requirements of various subject areas, ensuring relevance and effectiveness. Efforts should also focus on developing and integrating complementary AI-based systems to support other aspects of the ODL framework at OUM, such as automated grading and adaptive learning pathways. Establishing a robust infrastructure for the seamless integration of these systems, while ensuring scalability, user-friendliness, and alignment with OUM’s pedagogical goals, will be essential. Continuous evaluation of the impact of these technologies on student outcomes and faculty workload will be vital for guiding their refinement and broader implementation.

Acknowledgment: The authors wish to thank Studiosity Pty. Ltd. (https://www.studiosity.com/) for their generous research grant and for providing access to their software platform through OUM’s Moodle LMS. This support has been instrumental in implementing and exploring the potential of AI-assisted feedback systems at OUM.

Analytikus. (2024). The future of education and AI: Automated assessment and feedback. https://www.analytikus.com/post/the-future-of-education-and-ai-automated-assessment-and-feedbac

Black, P., & Wiliam, D. (1998). Assessment and classroom learning. Assessment in Education: Principles, Policy & Practice, 5(1), 7-74. https://doi.org/10.1080/0969595980050102

Choy, J., & Quek, C. (2016). Modelling relationships between students’ academic achievement and community of inquiry in an online learning environment for a blended course. Australasian Journal of Educational Technology, 32(4). https://doi.org/10.14742/ajet.2500

Er, E., Akçapınar, G., Bayazıt, A., Noroozi, O., & Banihashem, S. (2024). Assessing student perceptions and use of instructor versus AI‐generated feedback. British Journal of Educational Technology. https://doi.org/10.1111/bjet.13558

FlowHunt. (2024). AI-based student feedback. https://www.flowhunt.io/glossary/ai-based-student-feedback/

Guo, K. (2024). EvaluMate: Using AI to support students’ feedback provision in peer assessment for writing. Assessing Writing, 61, 100864. https://doi.org/10.1016/j.asw.2024.100864

Hattie, J., & Timperley, H. (2007). The power of feedback. Review of Educational Research, 77(1), 81-112. https://doi.org/10.3102/003465430298487

Luckin, R., Holmes, W., Griffiths, M., & Forcier, L.B. (2016). Intelligence unleashed: An argument for AI in education. Pearson. https://static.googleusercontent.com/media/edu.google.com/en//pdfs/Intelligence-Unleashed-Publication.pdf

Nguyen, L.T., & Tuamsuk, K. (2022). Digital learning ecosystem at educational institutions: A content analysis of scholarly discourse. Cogent Education, 9(1), 2111033.

Nicol, D.J., & Macfarlane-Dick, D. (2006). Formative assessment and self-regulated learning: A model and seven principles of good feedback practice. Studies in Higher Education, 31(2), 199-218. https://doi.org/10.1080/03075070600572090

Pinto-Llorente, A.M., & Izquierdo-Álvarez, V. (2024). Digital learning ecosystem to enhance formative assessment in second language acquisition in higher education. Sustainability, 16(11), 4687. https://doi.org/10.3390/su16114687

Sadler, D.R. (1989). Formative assessment and the design of instructional systems. Instructional Science, 18(2), 119-144. https://doi.org/10.1007/BF00117714

Shute, V.J. (2008). Focus on formative feedback. Review of Educational Research, 78(1), 153-189. https://doi.org/10.3102/0034654307313795

Zawacki-Richter, O., Marín, V.I., Bond, M., & Gouverneur, F. (2019). Systematic review of research on artificial intelligence applications in higher education—Where are the educators? International Journal of Educational Technology in Higher Education, 16(39). https://doi.org/10.1186/s41239-019-0171-0

Author Notes

Nantha Kumar Subramaniam is an academic at the Open University Malaysia (OUM) with over 20 years of experience in computing, specialising in intelligent learning systems, natural language processing, and computer science education. He previously served as Adviser for Technology-Enabled Learning at the Commonwealth of Learning (COL), Canada, and completed a prestigious leadership development programme in Japan. Nantha has received numerous awards for his contributions to digital education, including the Best E-Learning Product at the International University Carnival on E-Learning (IUCEL) 2021 and multiple Gold Medals at the Asian Association of Open Universities (AAOU) conferences for innovations in adaptive and intelligent learning systems. He is currently serving as the Dean of the Faculty of Computing and Analytics at OUM, where he continues to lead initiatives in AI-driven learning, digital innovation, and open and distance education. Email: nanthakumar@oum.edu.my (https://orcid.org/0000-0002-5870-5949)

Santhi Raghavan is a Professor specialising in business studies, training management, organisational management, human resource management, and open and distance education, playing a pivotal role in enhancing the learner experience and technology at Open University Malaysia (OUM). Since joining OUM in 2001 as a tutor, she has held various key leadership roles and currently serves as Vice President/Deputy Vice Chancellor (Learner Experience & Technology). She has conducted postgraduate courses internationally, fostering collaborations in countries such as Bahrain, Yemen, Indonesia, Sri Lanka, and Vietnam, and, in 2024, she was invited as a Visiting Professor to the Arab Open University, Kuwait. Email: santhi@oum.edu.my (https://orcid.org/0009-0009-3364-2513)

Cite as: Subramaniam, N.K., & Raghavan, S. (2025). Integration of an AI-powered feedback system for formative assessment at the Open University Malaysia. Journal of Learning for Development, 12(3), 636-644.