Sireesha Prathigadapa, Morampudi Rama Tulasi Raju and Leela Krishna Ganapavarapu

2026 VOL. 13, No. 1

Abstract: Despite the widespread adoption of digital pedagogical tools in higher education, there is limited empirical evidence regarding how demographic factors influence student perceptions of structured formative assessments in computing education. This study addresses this gap by examining associations between three demographic variables—gender, nationality, and programme of study—and student satisfaction with weekly challenge tests implemented in a computing module. Using a cross-sectional survey design, 198 first-year students at Asia Pacific University completed validated instruments measuring satisfaction with weekly digital assessments. Chi-square analyses revealed no significant associations between satisfaction and either gender (χ² = 8.58, p = .073) or nationality (χ² = 6.29, p = .178), indicating demographic equity in tool perception. Conversely, the programme of study showed a significant association with satisfaction (χ² = 33.27, p = .032), indicating discipline-specific variation in perceived pedagogical value. The findings contribute theoretically by demonstrating that well-designed digital formative assessments can achieve demographic neutrality while requiring programme-sensitive implementation. Practically, results inform inclusive instructional design principles for technology-enabled learning and provide empirical support for context-aware pedagogical strategies. This study advances Sustainable Development Goal 4 by demonstrating the provision of equitable, quality education through data-informed digital pedagogy in computing disciplines.

Keywords: formative assessment, digital pedagogy, demographic equity, computing education, student satisfaction, technology-enabled learning

The global shift toward technology-enhanced learning has fundamentally transformed pedagogical practices in higher education, particularly in computing disciplines, where digital literacy functions both as a learning outcome and a methodological tool (Flores-Vivar & García-Peñalvo, 2023). This transformation aligns closely with Sustainable Development Goal 4 (SDG 4), which emphasises inclusive, equitable, and quality education for all learners (Rad et al., 2022). As digital pedagogies become increasingly embedded in university curricula, attention has shifted from adoption toward understanding how such tools influence student learning experiences and satisfaction—an important indicator of perceived learning value within learning and development education.

Formative assessment has emerged as a central strategy in technology-enhanced learning due to its emphasis on continuous feedback, learning progression, and self-regulated learning. Weekly digital formative assessments, such as challenge tests, operationalise these principles by offering structured, low-stakes opportunities for students to engage iteratively with course content. In computing education, particularly in foundational mathematics modules where abstract concepts often challenge first-year students, such scaffolded approaches are viewed as effective mechanisms for supporting conceptual understanding and easing the transition to tertiary learning (Gheorghe et al., 2023). However, the extent to which these pedagogical tools function equitably across diverse student populations remains an important and underexplored issue.

This study was situated within a Malaysian private university offering multiple undergraduate computing programmes. The Mathematical Concepts for Computing (MCFC) module is a foundational requirement across six programmes and provides a consistent instructional context in which a single pedagogical tool is experienced by students from diverse demographic and academic backgrounds. The module incorporates weekly challenge tests covering core topics such as Logic Algebra, Boolean Algebra, Graph Theory, and Trees—concepts essential for computational thinking and algorithm development. The weekly challenge tests were designed as formative assessments emphasising learning as an iterative process rather than a summative outcome. By providing regular feedback and varied levels of cognitive complexity, these assessments aimed to scaffold learning progression and promote engagement (Anastasopoulou et al., 2024; Galdames-Calderón et al., 2024).

Student satisfaction with the weekly challenge tests served as the dependent variable in this study and was conceptualised as students’ perceptions of learning value, accessibility, and usefulness of the pedagogical tool (Gheorghe et al., 2023). The independent variables were gender, nationality, and programme of study, factors commonly examined in learning development and educational technology research. The conceptual framework underpinning this study assumed that while pedagogical design directly influences satisfaction, demographic and disciplinary characteristics might moderate students’ perceptions of learning tools. Gender and nationality reflect equity and diversity considerations, whereas the programme of study represents disciplinary learning cultures and epistemic alignment. Examining these variables concurrently enables a more comprehensive understanding of how student satisfaction is shaped within a shared pedagogical environment.

Research examining gender differences in technology-enhanced learning reports mixed findings. Several studies indicate no significant gender-based differences in satisfaction when digital tools are well-designed and supported by transparent assessment criteria (Andersen et al., 2020; Hesse et al., 2023). Other research highlights initial differences in technology confidence among female students in STEM disciplines, though these differences often diminish within supportive learning environments (Azizah et al., 2021; Wrigley-Asante et al., 2023). Importantly, such differences are largely attributed to sociocultural factors rather than inherent cognitive abilities (Beroíza-Valenzuela & Salas-Guzmán, 2024). Nationality and cultural background also shape students’ expectations regarding assessment practices. While some studies report cultural variation in responses to digital assessments (Arnold & Versluis, 2019; Fernández-Leyva et al., 2021), others demonstrate that clear structure and consistent feedback can mitigate these differences (Gheorghe et al., 2023; LaForge & Martin, 2022). The programme of study has been identified as a particularly influential factor, with evidence suggesting that students report higher satisfaction when assessment content aligns with disciplinary expectations and perceived professional relevance (Galdames-Calderón et al., 2024; Kwan, 2022).

Despite this growing body of research, several gaps persist. Many studies examine pedagogical tools across multiple courses or institutions, limiting the ability to isolate tool-specific effects. Additionally, demographic variables are often examined independently rather than simultaneously. Finally, computing education research remains dominated by Western contexts, leaving Southeast Asian higher education underrepresented (Airaj, 2022).

This study addresses these gaps by examining student satisfaction with weekly challenge tests within a single foundational computing module across diverse demographic groups in a Malaysian higher education context. The research objective was to investigate whether gender, nationality, and programme of study were associated with students’ satisfaction with the pedagogical tool.

The study addressed the following research questions:

The integration of digital pedagogical tools has fundamentally reshaped teaching and learning practices in higher education, particularly in computing disciplines where technology serves simultaneously as an instructional medium and a learning outcome (Flores-Vivar & García-Peñalvo, 2023). Among these innovations, formative assessment tools that emphasise continuous feedback, iterative learning, and process-oriented evaluation have gained substantial empirical support for enhancing student engagement, metacognitive awareness, and conceptual development (Galdames-Calderón et al., 2024). Weekly challenge tests represent a structured form of digital formative assessment designed to scaffold learning progression across semester-long curricula. Systematic reviews of challenge-based learning highlight the importance of alignment with authentic disciplinary practices, timely and actionable feedback, and appropriate cognitive demand for maximising student motivation and perceived learning value (Galdames-Calderón et al., 2024; Kwan, 2022).

Despite their pedagogical potential, the effectiveness of digital formative assessments is shaped by contextual factors such as disciplinary norms and assessment design. Anastasopoulou et al. (2024) documented significant programme-level variation in satisfaction with digital assessments, noting higher approval among engineering and computing students than among students in humanities disciplines. Similarly, Gheorghe et al. (2023) found that design characteristics (such as immediate feedback, varied question formats, and opportunities for multiple attempts) were stronger predictors of satisfaction than demographic variables. These findings suggest that pedagogical effectiveness depends not merely on technology adoption but on how digital tools embody evidence-based instructional principles within specific disciplinary contexts.

Research examining gender differences in satisfaction with digital learning tools has produced mixed findings. Several studies report no significant gender-based differences when tools are well-designed, accessible, and supported by transparent evaluation criteria (Andersen et al., 2020; Hesse et al., 2023). Wrigley-Asante et al. (2023) similarly found no significant gender differences in mathematics achievement among STEM students, challenging persistent stereotypes linking quantitative ability to masculinity. These findings indicate that thoughtfully designed digital assessments might achieve gender equity in both performance and satisfaction.

Other studies highlight more nuanced patterns. Azizah et al. (2021) observed differences in problem-solving approaches, with female students favouring systematic strategies and male students displaying greater comfort with heuristic methods, though both approaches proved equally effective. Large-scale reviews emphasise that sociocultural and contextual factors—including gender norms, self-efficacy beliefs, and classroom climate—play a more substantial role than biological or cognitive differences in shaping gendered educational outcomes (Beroíza-Valenzuela & Salas-Guzmán, 2024; Chan, 2022). Collectively, this literature suggests that gender differences in satisfaction with digital assessments are not inherent and could be mitigated through inclusive pedagogical design.

Cultural background and nationality influence students’ expectations regarding teaching methods and assessment practices (Arnold & Versluis, 2019). While some studies report nationality-based variation in student evaluations of teaching quality (Fernández-Leyva et al., 2021), others demonstrate that pedagogical clarity and consistent feedback can reduce cultural differences in satisfaction (Gheorghe et al., 2023; LaForge & Martin, 2022). These findings suggest that cultural effects may be context-dependent and adaptable rather than deterministic.

The programme of study has emerged as a particularly influential factor shaping satisfaction with digital assessments. Computing education encompasses diverse disciplines with distinct epistemological orientations and mathematical demands (Anastasopoulou et al., 2024). Students in mathematically intensive programmes, such as Computer Science and Cyber Security, often perceive foundational mathematics as more relevant than do students in applied programmes, such as Multimedia Technology (Kwan, 2022). Galdames-Calderón et al. (2024) found that satisfaction with challenge-based learning varied across disciplines based on perceived authenticity and professional relevance. This literature suggests that programme-level differences in satisfaction may reflect legitimate disciplinary variation rather than inequitable tool functioning.

This study adopted a quantitative, cross-sectional survey design to examine associations between demographic variables and student satisfaction with a weekly pedagogical tool. A non-experimental approach was appropriate given that the research aimed to identify relationships rather than infer causality. The study employed Chi-square tests of independence to examine associations between categorical independent variables—gender, nationality, and programme of study—and the categorical dependent variable of student satisfaction. This methodological approach is well established for analysing relationships among categorical variables in educational research contexts (Slater & Hasson, 2025; Wang & Cheng, 2020).

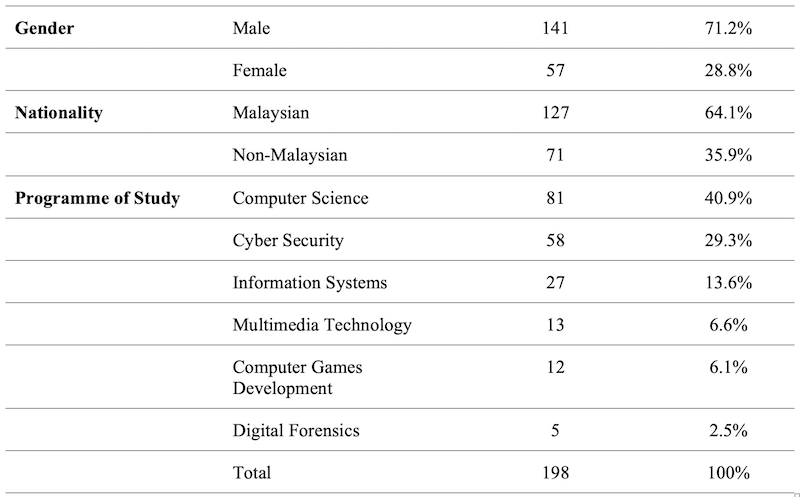

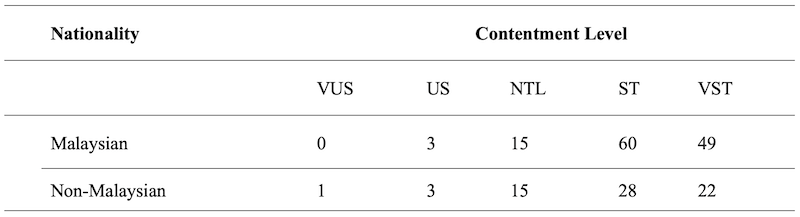

The study was conducted during the second semester of the 2023-2024 academic year at Asia Pacific University (APU), Malaysia. The target population comprised all first-year, second-semester students enrolled in the Mathematical Concepts for Computing (MCFC) module (N = 236). MCFC is a compulsory foundational module for Bachelor of Science (Honours) programmes related to Computing and Technology, covering core topics such as Logic Algebra, Boolean Algebra, Graph Theory, and Trees. A total of 198 students voluntarily participated in the study, representing an 83.9% response rate. This sample size exceeds conventional recommendations for Chi-square analysis and provides sufficient statistical power to detect meaningful associations among categorical variables (Maier et al., 2023; Slater & Hasson, 2025). Participants were drawn from six undergraduate programmes: Computer Science, Cyber Security, Information Systems, Multimedia Technology, Computer Games Development, and Digital Forensics. The sample demonstrated heterogeneity across gender, nationality, and programme of study, providing adequate variability for analysis. Sample characteristics are summarised in Table 1.

Table 1: Sample Demographic Characteristics

Data were collected using a structured questionnaire developed specifically for this study. The instrument consisted of two sections. The first section gathered demographic information, including gender (male/female), nationality (Malaysian/non-Malaysian), and programme of study. Student identification numbers were collected solely for administrative verification and were subsequently de-identified to ensure anonymity. The second section measured student satisfaction using a single global item: “Overall, how satisfied are you with the weekly challenge tests as a learning tool in the MCFC module?” Responses were recorded on a five-point Likert scale ranging from 1 (Very Dissatisfied) to 5 (Very Satisfied). Although multi-item scales are commonly used, methodological research supports the use of single-item measures for clearly defined, unidimensional constructs such as overall satisfaction (Jovanović & Lazić, 2020; Martela & Ryan, 2024). Prior studies reported strong convergent validity between single-item satisfaction measures and multi-item scales (r = 0.60-0.80), while reducing respondent burden and survey fatigue (Jovanović & Lazić, 2020; Spector, 2022). This approach aligns with contemporary practices in educational technology research (Candra & Jeselin, 2022). Content validity was established through expert review by three faculty members specialising in mathematics, computing education, and assessment design, who confirmed that the instrument adequately captured the satisfaction construct.

Procedure: Instrument Development, Data Collection, and Analysis

The questionnaire was developed following a review of relevant literature on student satisfaction and digital formative assessment. Data collection occurred during Week 12 of the 14-week semester, after students had completed 11 weekly challenge tests, ensuring that participants had sufficient experience with the pedagogical tool to provide informed responses. Students were invited to participate during scheduled class sessions and accessed the questionnaire via Microsoft Forms using a provided link. Participation was voluntary, and no incentives were offered. The online platform ensured anonymity through system-generated response codes, and students were informed that participation would not affect their academic performance or module grades.

Survey responses were tabulated and analysed using R software (Version 4.3.1). Chi-square tests of independence were conducted to examine associations between demographic variables and satisfaction levels, with statistical significance set at α = 0.05. Cramér’s V was calculated for statistically significant results to assess effect size (0.10 = small, 0.30 = medium, 0.50 = large). All assumptions underlying Chi-square analysis were verified before hypothesis testing.
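The analysis described above was run in R. As a language-neutral illustration, the following Python sketch shows how a Chi-square test of independence and Cramér’s V can be computed from a contingency table. The function names and the example counts are hypothetical and are not the study’s data or code. The p-value helper uses a closed form that holds only for even degrees of freedom, which covers a two-group by five-category table (df = 4).

```python
import math


def chi_square_independence(table):
    """Return (statistic, df) for a contingency table given as a list of rows."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, df


def chi2_upper_tail_even_df(x, df):
    """Upper-tail Chi-square probability; this closed form needs an even df."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("closed form requires a positive, even df")
    half = x / 2.0
    term = 1.0
    total = 1.0
    for i in range(1, df // 2):
        term *= half / i  # builds (x/2)^i / i! incrementally
        total += term
    return math.exp(-half) * total


def cramers_v(statistic, n, n_rows, n_cols):
    """Effect size for a Chi-square test of independence."""
    return math.sqrt(statistic / (n * min(n_rows - 1, n_cols - 1)))


# Hypothetical counts (NOT the study's data): two groups by five Likert categories.
hypothetical = [
    [6, 10, 24, 40, 20],
    [8, 12, 20, 30, 30],
]
stat, df = chi_square_independence(hypothetical)
p_value = chi2_upper_tail_even_df(stat, df)
n = sum(sum(row) for row in hypothetical)
print(f"chi2 = {stat:.3f}, df = {df}, p = {p_value:.4f}")
print(f"Cramer's V = {cramers_v(stat, n, 2, 5):.3f}")
```

The sketch does not check the expected-count assumption; in practice each expected cell count would be inspected before the statistic is interpreted.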

This section presents the results of the Chi-square analyses conducted to examine associations between selected demographic variables (gender, nationality, and programme of study) and students’ contentment levels with the weekly pedagogical tool.

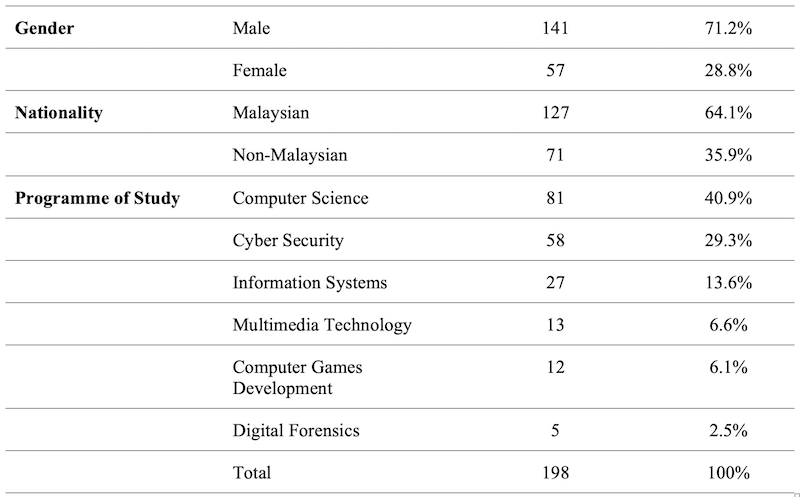

A Chi-square test of independence was conducted to examine whether students’ contentment levels differed by gender. The cross-tabulation of contentment levels by gender is presented in Table 2, which illustrates the distribution of responses across five contentment categories (Very Unsatisfied, Unsatisfied, Neutral, Satisfied, and Very Satisfied) for male and female students. The analysis yielded χ² = 8.5751 with 4 degrees of freedom and p = 0.07264, which exceeds the conventional significance threshold of 0.05.

These results indicate that there was no statistically significant association between gender and students’ contentment levels. In practical terms, students’ perceptions of the pedagogical tool appeared to be independent of gender, suggesting that male and female students experienced similar levels of contentment within this educational context.

Table 2: Contentment Levels by Gender

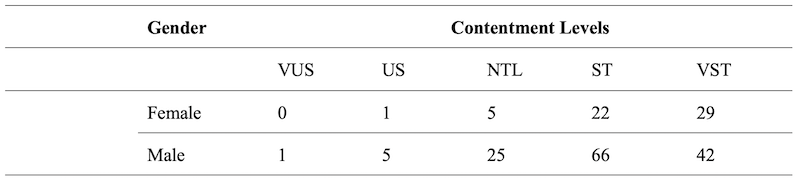

To examine whether students’ nationality was associated with their contentment levels, a Chi-square test of independence was performed. The cross-tabulated results are presented in Table 3, showing the distribution of contentment levels among Malaysian and non-Malaysian students. As shown in Table 3, students from both nationality groups demonstrated broadly similar response patterns, with the majority reporting that they were Satisfied or Very Satisfied. The analysis produced χ² = 6.2916 with 4 degrees of freedom and p = 0.1784.

Since the p-value exceeds 0.05, the analysis indicates no statistically significant association between nationality and students’ contentment levels. This finding suggests that, within the scope of this study, nationality was not significantly associated with students’ satisfaction with the weekly pedagogical tool.

Table 3: Contentment Levels by Nationality

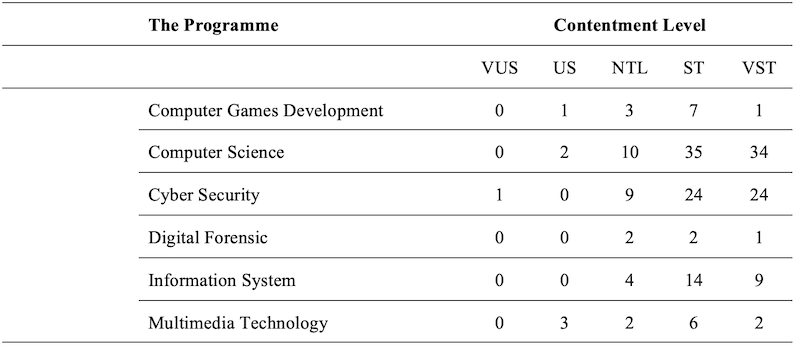

The relationship between students’ programme of study and their contentment levels was examined using a Chi-square test of independence. The cross-tabulated results are presented in Table 4, which displays contentment levels across six computing-related programmes. The analysis revealed a statistically significant association between programme of study and contentment levels. The Chi-square statistic was χ² = 33.271 with 20 degrees of freedom (six programmes by five contentment categories), and the p-value was p = 0.0315, which is below the 0.05 significance threshold. This result indicates that students’ contentment with the pedagogical tool varied significantly across academic programmes. Higher satisfaction levels were observed in mathematically intensive programmes such as Computer Science and Cyber Security, whereas comparatively lower satisfaction levels were evident in more applied programmes such as Multimedia Technology and Computer Games Development.

Table 4: Contentment Levels by Programme of Study

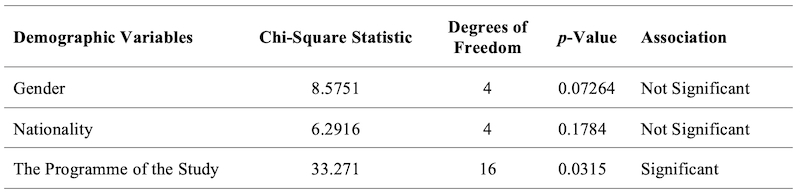

The summary in Table 5 provides a concise overview of the associations between demographic factors (gender, nationality, and the programme of study) and students’ contentment levels within the educational context.

Table 5: Association between Demographic Variables and Students’ Contentment Levels

As summarised in Table 5, gender and nationality were not significantly associated with students’ contentment levels, whereas the programme of study was. These findings indicate that the weekly pedagogical tool was perceived similarly across gender and nationality groups, while disciplinary context played a meaningful role in shaping students’ contentment. Overall, the variation in student contentment observed in this setting was linked to programme of study rather than to gender or nationality.
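The reported p-values can be cross-checked from the reported Chi-square statistics alone. The sketch below is an illustrative check, not the authors’ code. It assumes that the degrees of freedom follow (r − 1)(c − 1) from the table dimensions described in the text (two groups or six programmes, each by five response categories) and that all 198 responses entered each test. The Cramér’s V values it prints are derived under those assumptions and are not figures reported by the study.

```python
import math


def chi2_upper_tail_even_df(x, df):
    """Upper-tail Chi-square probability; this closed form needs an even df."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("closed form requires a positive, even df")
    half = x / 2.0
    term = 1.0
    total = 1.0
    for i in range(1, df // 2):
        term *= half / i  # builds (x/2)^i / i! incrementally
        total += term
    return math.exp(-half) * total


# Reported statistic with assumed table shape (rows, columns).
reported = {
    "gender": (8.5751, 2, 5),
    "nationality": (6.2916, 2, 5),
    "programme of study": (33.271, 6, 5),
}
SAMPLE_SIZE = 198  # assumption: every respondent is included in each table


def summarise(statistic, n_rows, n_cols, n=SAMPLE_SIZE):
    """Return (df, p-value, Cramér's V) for a reported Chi-square statistic."""
    df = (n_rows - 1) * (n_cols - 1)
    p_value = chi2_upper_tail_even_df(statistic, df)
    effect = math.sqrt(statistic / (n * min(n_rows - 1, n_cols - 1)))
    return df, p_value, effect


for name, (statistic, n_rows, n_cols) in reported.items():
    df, p_value, effect = summarise(statistic, n_rows, n_cols)
    print(f"{name}: df = {df}, p = {p_value:.4f}, Cramer's V = {effect:.3f}")
```

Under these assumptions, the recomputed p-values agree with the reported ones to the precision given, which is a quick consistency check on the statistics and their degrees of freedom.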

This study examined the associations between gender, nationality, and programme of study and students’ contentment with a weekly digital formative assessment tool in a foundational computing mathematics module. The findings demonstrate that while the pedagogical tool functioned equitably across gender and nationality, students’ programme of study was significantly associated with contentment levels. These results highlight the importance of both inclusive design and disciplinary alignment in technology-enabled learning environments.

The absence of a statistically significant association between gender and students’ contentment aligns with contemporary research indicating that gender-based differences in digital learning contexts are largely mediated by pedagogical design rather than inherent learner characteristics (Hesse et al., 2023; Wrigley-Asante et al., 2023). As outlined in the introduction, sociocultural and contextual factors play a central role in shaping gendered educational experiences (Beroíza-Valenzuela & Salas-Guzmán, 2024). The structured, low-stakes format of the weekly challenge tests, combined with transparent evaluation criteria and regular feedback, appears to have supported equitable learning experiences across genders. Similarly, nationality was not found to be a significant predictor of contentment. This finding supports previous studies suggesting that pedagogical clarity and consistency can mitigate cultural and linguistic barriers in technology-enhanced learning environments (Arnold & Versluis, 2019; Gheorghe et al., 2023). Within the present context, both Malaysian and non-Malaysian students reported comparable satisfaction levels, indicating that the digital formative assessment functioned inclusively across national backgrounds. From a learning for development perspective, this underscores the potential of technology-enabled pedagogy to promote equitable access and participation in increasingly internationalised higher education systems.

In contrast to gender and nationality, the programme of study demonstrated a statistically significant association with students’ contentment. This result is consistent with the literature emphasising the influence of disciplinary learning cultures and perceived relevance on student engagement (Anastasopoulou et al., 2024; Galdames-Calderón et al., 2024). Students enrolled in mathematically intensive programmes such as Computer Science and Cyber Security reported higher contentment, likely reflecting stronger alignment between assessment content and disciplinary expectations. Conversely, students in more applied programmes might perceive foundational mathematics as less central to their academic trajectories. This finding suggests that while digital assessments can achieve demographic neutrality, programme-sensitive contextualisation remains important for optimising learning experiences.

The findings have important implications for policy and practice in technology-enabled learning. Higher education institutions should adopt universal design principles while also incorporating discipline-relevant examples and explicit relevance framing within digital formative assessments. Such approaches can enhance student contentment without compromising standardisation or scalability, thereby supporting inclusive learning development aligned with SDG 4.

Future research should employ longitudinal and mixed-methods approaches to examine how student contentment evolves across programmes and academic progression. Additional variables, such as prior academic preparation and digital self-efficacy, should also be explored to develop a more comprehensive understanding of satisfaction in technology-enabled learning environments.

Airaj, M. (2022). Cloud computing technology and PBL teaching approach for a qualitative education in line with SDG4. Sustainability, 14(23), Article 15766. https://doi.org/10.3390/su142315766

Anastasopoulou, E., Konstantina, G., Tsagri, A., Schoina, I., Travlou, C., Mitroyanni, E., & Lyrintzi, T. (2024). The impact of digital technologies on formative assessment and the learning experience. Technium Education and Humanities, 10, 115-126. https://doi.org/10.47577/teh.v10i.12113

Andersen, F.A., Johansen, A-S.B., Søndergaard, J., Andersen, C.M., & Assing Hvidt, E. (2020). Revisiting the trajectory of medical students’ empathy, and impact of gender, specialty preferences and nationality: A systematic review. BMC Medical Education, 20(1), 52. https://doi.org/10.1186/s12909-020-1964-5

Arnold, I.J.M., & Versluis, I. (2019). The influence of cultural values and nationality on student evaluation of teaching. International Journal of Educational Research, 98, 13-24. https://doi.org/10.1016/j.ijer.2019.08.009

Azizah, N., Budiyono, B., & Siswanto, S. (2021). Students’ conceptual understanding in terms of gender differences. Journal of Mathematics and Mathematics Education, 11(1), Article 1. https://doi.org/10.20961/jmme.v11i1.52746

Beroíza-Valenzuela, F., & Salas-Guzmán, N. (2024). STEM and gender gap: A systematic review in WoS, Scopus, and ERIC databases (2012-2022). Frontiers in Education, 9. https://doi.org/10.3389/feduc.2024.1378640

Candra, S., & Jeselin, F.S. (2022). Students’ perspectives on using e-learning applications and technology during the COVID-19 pandemic in Indonesian higher education. Journal of Science and Technology Policy Management, 15(2), 226-243. https://doi.org/10.1108/JSTPM-12-2021-0185

Chan, R.C.H. (2022). A social cognitive perspective on gender disparities in self-efficacy, interest, and aspirations in science, technology, engineering, and mathematics (STEM): The influence of cultural and gender norms. International Journal of STEM Education, 9(1), 37. https://doi.org/10.1186/s40594-022-00352-0

Fernández-Leyva, C., Tomé-Fernández, M., & Ortiz-Marcos, J.M. (2021). Nationality as an influential variable with regard to the social skills and academic success of immigrant students. Education Sciences, 11(10), Article 605. https://doi.org/10.3390/educsci11100605

Flores-Vivar, J-M., & García-Peñalvo, F-J. (2023). Reflexiones sobre la ética, potencialidades y retos de la Inteligencia Artificial en el marco de la Educación de Calidad (ODS4). Comunicar: Revista Científica de Comunicación y Educación, 31(74), 37-47. https://doi.org/10.3916/C74-2023-03

Galdames-Calderón, M., Stavnskær Pedersen, A., & Rodriguez-Gomez, D. (2024). Systematic review: Revisiting challenge-based learning teaching practices in higher education. Education Sciences, 14(9), Article 1008. https://doi.org/10.3390/educsci14091008

Gheorghe, C.M., Dumitrescu, A-M., Plămănescu, R., & Albu, M. (2023). Predictors of satisfaction, motivation, and learning engagement for engineering students. 2023 13th International Symposium on Advanced Topics in Electrical Engineering (ATEE), 1-6. https://doi.org/10.1109/ATEE58038.2023.10108252

Hesse, D.W., Ramsey, L.M., Bruner, L.P., Vega-Castillo, C.S., Teshager, D., Hill, J.R., Bond, M.T., Sperr, E.V., Baldwin, A., & Medlock, A.E. (2023). Exploring academic performance of medical students in an integrated hybrid curriculum by gender. Medical Science Educator, 33(2), 353-357. https://doi.org/10.1007/s40670-023-01743-w

Jovanović, V., & Lazić, M. (2020). Is longer always better? A comparison of the validity of single-item versus multiple-item measures of life satisfaction. Applied Research in Quality of Life, 15(3), 675-692. https://doi.org/10.1007/s11482-018-9680-6

Kwan, Y.L.L. (2022). Exploring experiential learning practices to improve students’ understanding. PUPIL: International Journal of Teaching, Education and Learning, 6(1), Article 1. https://doi.org/10.20319/pijtel.2022.61.7289

LaForge, J., & Martin, E.C. (2022). Impact of authentic course-based undergraduate research experiences (CUREs) on student understanding in introductory biology laboratory courses. The American Biology Teacher, 84(3), 137-142. https://doi.org/10.1525/abt.2022.84.3.137

Maier, C., Thatcher, J.B., Grover, V., & Dwivedi, Y.K. (2023). Cross-sectional research: A critical perspective, use cases, and recommendations for IS research. International Journal of Information Management, 70, 102625. https://doi.org/10.1016/j.ijinfomgt.2023.102625

Martela, F., & Ryan, R.M. (2024, July 24). Assessing autonomy, competence, and relatedness briefly. European Journal of Psychological Assessment. https://doi.org/10.1027/1015-5759/a000846

Rad, D., Redeş, A., Roman, A., Ignat, S., Lile, R., Demeter, E., Egerău, A., Dughi, T., Balaş, E., Maier, R., Kiss, C., Torkos, H., & Rad, G. (2022). Pathways to inclusive and equitable quality early childhood education for achieving SDG4 goal—A scoping review. Frontiers in Psychology, 13. https://doi.org/10.3389/fpsyg.2022.955833

Slater, P., & Hasson, F. (2025). Quantitative research designs, hierarchy of evidence and validity. Journal of Psychiatric and Mental Health Nursing, 32(3), 656-660. https://doi.org/10.1111/jpm.13135

Spector, P.E. (2022, May 17). Single-item measures are better than you think. Paul Spector. https://paulspector.com/single-item-measures-are-better-than-you-think/

Wang, X., & Cheng, Z. (2020). Cross-sectional studies: Strengths, weaknesses, and recommendations. CHEST, 158(1), S65-S71. https://doi.org/10.1016/j.chest.2020.03.012

Wrigley-Asante, C., Ackah, C.G., & Frimpong, L.K. (2023). Gender differences in academic performance of students studying Science Technology Engineering and Mathematics (STEM) subjects at the University of Ghana. SN Social Sciences, 3(1), 12. https://doi.org/10.1007/s43545-023-00608-8

Author Notes

Dr Sireesha Prathigadapa is a researcher and academic in the School of Mathematical and Quantitative Sciences (SOMAQS), Asia Pacific University of Technology and Innovation. Her research focuses on AI in mathematics, educational intervention systems, and graph-theoretic applications in personalised learning. She has developed and implemented the Refinery Intervention Treatment (RIT), a structured peer micro-mentoring framework designed to support at-risk students in foundational mathematics courses. Her work integrates discrete mathematics, automation in peer tutoring systems, and innovative assessment design to enhance student performance in computing-related programs. She has published in the area of peer-assisted learning and continues to explore scalable, data-driven educational innovation models. Email: geethikasree@gmail.com (https://orcid.org/0009-0006-1162-8700)

Morampudi Rama Tulasi Raju is a senior IT professional with over 20 years of industry experience in software engineering, systems architecture, and Artificial Intelligence solutions. He has led and developed large-scale technical projects across enterprise and educational domains, including technology-driven student development platforms. His expertise includes AI system design, automation frameworks, and scalable application development. He holds two patents in the field of intelligent systems and continues to focus on applied AI innovations that bridge industry needs and educational transformation. Email: ai.mrtraju@gmail.com (https://orcid.org/0009-0001-3010-3006)

Dr Leela Krishna Ganapavarapu is a distinguished and ratified Associate Professor with over two decades of progressive experience spanning higher education, institutional administration, quality assurance, and industry practice. His expertise lies in academic quality systems, curriculum design, pedagogy enhancement, programme accreditation, compliance documentation, and institutional audit frameworks. As a Certified Quality Control Officer (MQA Tier 1 and Tier 2), he has played a key role in strengthening academic governance and continuous quality improvement initiatives within higher education institutions. Dr. Krishna has authored 13 peer-reviewed research articles published in reputable international journals and actively contributes to the scholarly community as a reviewer for the Journal of Business Economics and the Journal of Accounting and Marketing. His academic interests focus on educational quality assurance, innovative teaching practices, and sustainable institutional development. Email: Leelakrishna999@gmail.com (https://orcid.org/0000-0001-6998-3269)

Cite as: Prathigadapa, S., Raju, M.R.T., & Ganapavarapu, L.K. (2026). Exploring student contentment with weekly pedagogical tool: A demographic analysis in computing. Journal of Learning for Development, 13(1), 186-197.